WHY AI RISK ASSESSMENT IS NOW A BOARD-LEVEL REQUIREMENT

AI now sits at the center of business decisions. It shapes pricing, hiring, fraud detection, and customer experience. That shift brings speed and scale, but it also brings risk that many teams still struggle to control. As a result, organizations now depend on structured AI risk assessment to track how these systems behave in real conditions.

In the past year, leaders have faced hard lessons. Regulators have stepped in with clear expectations, especially under frameworks like the EU AI Act. At the same time, real incidents have exposed weak controls. Biased models have led to unfair decisions. Sensitive data has leaked through AI tools. Some systems have produced inaccurate outputs that reached customers and damaged trust. These are not edge cases anymore. They show up in audits, legal reviews, and board discussions, which is why ongoing AI risk assessment is now part of governance.

Boards now carry direct accountability for how AI impacts the business. They ask simple but critical questions. Can we trust our AI outputs? Can we explain decisions under scrutiny? Do we have proof that risks are under control? These questions often lead teams to adopt formal AI risk assessment practices for better visibility.

AI risk assessment gives a clear path forward. It is a structured process to identify, evaluate, and reduce risks linked to AI systems across their lifecycle. In addition, a consistent AI risk assessment approach helps align technical controls with compliance and audit expectations.

This matters because AI risk now affects revenue, compliance, and reputation at the same time. A weak control in one model can trigger financial loss or regulatory action.

This blog will help you understand why this shift has happened, what boards expect today, and how your organization can build a practical and defensible AI risk approach.

TL;DR:

Concern: AI systems now drive pricing, hiring, fraud detection, and customer interactions. Weak controls lead to biased decisions, data leaks, and inaccurate outputs. These failures trigger regulatory penalties, financial loss, and reputational damage. Boards face direct accountability and must answer how AI decisions are validated, monitored, and controlled.

Overview: AI risk assessment is a lifecycle process that identifies, classifies, and manages risks across data, models, and decision outputs. It connects technical performance with compliance requirements such as the EU AI Act, GDPR, and ISO standards. Organizations use it to map AI systems, detect model drift, track bias, and produce audit-ready documentation. This creates a single, consistent view of AI risk across the business.

Solution: Adopt a structured AI risk framework with clear governance, system classification, and continuous monitoring. Maintain detailed documentation, enforce controls like bias testing and model validation, and integrate AI risk into enterprise risk management. Use independent audits and certifications such as ISO 42001 to validate controls and demonstrate compliance. This approach delivers measurable risk reduction, audit readiness, and board-level confidence.

WHAT IS AI RISK ASSESSMENT AND WHY IT MATTERS IN 2026

AI risk assessment is the process of identifying, classifying, and managing risks that emerge across the lifecycle of an AI system, from the data it trains on to the decisions it makes in production. In practice, a structured AI risk assessment helps teams map how data, models, and decisions connect across real environments.

Most organizations got comfortable with AI during the pilot phase. Controlled environments, limited users, low stakes. That era is over. AI now runs credit decisions, flags fraud, filters job applications, and drives customer interactions at scale. The margin for undetected failure is thin. As adoption grows, teams rely on ongoing AI risk assessment to track how models behave under real-world pressure.

So what actually goes wrong?

- Data gets leaked through poorly governed inputs.

- Models drift from their original behavior after months in production.

- Automated decisions carry bias that nobody audited.

- A single regulatory misstep can trigger penalties that dwarf the cost of any AI project.

And when customers find out? Trust erodes fast, and it rarely comes back quietly.

The uncomfortable truth is that most AI failures are not sudden breakdowns. They are quietly expanding risk exposures: a recommendation model that slowly skews its outputs, or a classification system that produces subtly biased results. Without structured risk assessment, these issues compound before anyone notices. This is where a consistent AI risk assessment framework brings early visibility into hidden risks.

Regulators have noticed. The EU AI Act, SEC guidance, and sector-specific frameworks now require documented risk controls rather than informal assurances. As a result, organizations now align compliance efforts with formal AI risk assessment processes that produce clear, audit-ready evidence.

AI risk assessment gives organizations a clear and consistent way to identify issues before they become incidents. It covers data integrity, model behavior, operational dependencies, compliance exposure, and reputational impact in one connected view.

In 2026, it’s a core part of responsible and ethical AI management.

WHY AI RISK ASSESSMENT HAS ESCALATED TO THE BOARDROOM LEVEL

Let’s understand this with a scenario. Imagine you are a SaaS founder who feels confident about your AI rollout. Your team has built a smart pricing model. It runs fast and looks clean. Then a client flags strange price swings. Within weeks, the issue reaches your legal and finance teams. That is the moment you realize AI has moved from a tech topic to a boardroom concern, which makes an early AI risk assessment critical.

AI now drives key business actions, such as setting prices, filtering leads, flagging fraud, and shaping user journeys. Each output affects revenue, trust, and compliance. That makes AI risk a business risk. Leaders feel it in quarterly numbers and customer feedback. As a result, companies now connect performance monitoring with ongoing AI risk assessment practices.

Board accountability has grown fast. Regulators expect clear answers, investors want visibility into the AI development lifecycle, and security teams now track AI risks alongside cyber threats. A single weak model can expose sensitive data or create biased outcomes, which raises security and compliance issues at the same time.

Real-world failures have pushed this shift. Companies have paid fines after flawed AI decisions. Some faced public criticism due to biased outputs. Others dealt with system errors that disrupted their entire operations. These events spread quickly and damage brand value, which is why firms now rely on structured AI risk assessment before scaling models.

Boards now focus on two simple questions. Can we trust our AI decisions? Can we explain them during an audit?

These questions drive a new mindset. AI needs the same oversight as finance and security. Clear controls, strong validation, and audit-ready records now sit on the board agenda. This is where serious companies draw the line between growth and risk.

REGULATORY AND COMPLIANCE PRESSURE ON AI RISK ASSESSMENT

AI risk assessment has moved from discussion to enforcement. In 2026, companies face clear rules on how they build and use AI. This shift puts pressure on teams that already struggle with speed, scale, and control.

Global Regulatory Landscape

The EU AI Act leads this change. It classifies AI systems by risk level. High-risk systems, such as those used in hiring or credit scoring, need strict controls. These include testing, human oversight, and detailed records. At the same time, GDPR still applies. Any AI system that uses personal data must follow rules on consent, purpose, and data protection. Regulators now connect these laws. They expect companies to treat AI risk assessment as a core part of enterprise data and security measures.

Sector rules add another layer. In finance, AI decisions must stay fair and explainable. In healthcare, patient safety and data accuracy come first. These rules create real pressure. Teams must prove that their AI systems work as intended and do not cause harm.

Compliance Requirements

Compliance now depends on three core actions. Companies must classify AI systems by risk. They must maintain clear documentation and audit trails. They must also explain how AI models make decisions. This is where many teams struggle. Black-box models create gaps during audits.

Connection with Existing Frameworks

Existing standards help bring structure to AI risk assessment. ISO 27001 supports data security. ISO 42001 focuses on AI governance. SOC 2 builds trust through control validation. Together, they create a practical foundation for AI risk assessment.

Regulators now expect a simple outcome. Every AI system should have a defined risk level and documented controls, especially for high-risk use cases.

CORE COMPONENTS OF AN EFFECTIVE AI RISK MANAGEMENT FRAMEWORK

In this section, let’s learn about the key components of an effective AI risk management framework.

AI Inventory and Classification

Most teams don’t have a clear view of where AI runs inside the business. That creates blind spots during operations. Therefore, a structured AI risk assessment starts by listing every AI system in use, including third-party tools, APIs, and internal models.

Then classify each system by risk level. For example, a chatbot handling FAQs carries low risk, while a model approving loans or screening candidates carries high risk. This step helps you focus your effort where failure would hurt most. It also prepares you for regulatory reviews that expect clear system mapping.
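To make the inventory step concrete, here is a minimal Python sketch. It assumes a simple two-tier scheme (low and high) and a hypothetical list of high-risk use cases; real regulatory schemes may use more tiers, and the names `AISystem`, `HIGH_RISK_USE_CASES`, and `classify` are illustrative rather than part of any standard.

```python
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical set of use cases treated as high risk; adjust to your own policy.
HIGH_RISK_USE_CASES = {"loan_approval", "candidate_screening", "credit_scoring"}


@dataclass
class AISystem:
    name: str
    use_case: str
    owner: str
    third_party: bool = False


def classify(system: AISystem) -> RiskLevel:
    """Return HIGH when the use case is on the high-risk list, else LOW."""
    if system.use_case in HIGH_RISK_USE_CASES:
        return RiskLevel.HIGH
    return RiskLevel.LOW


# Illustrative inventory covering a third-party tool and two internal models.
inventory = [
    AISystem("support-chatbot", "faq_chatbot", "support", third_party=True),
    AISystem("loan-scorer", "loan_approval", "credit-risk"),
    AISystem("resume-filter", "candidate_screening", "hr"),
]

# Group systems by risk level so review effort goes to the high-risk ones first.
by_level = {level: [] for level in RiskLevel}
for system in inventory:
    by_level[classify(system)].append(system.name)
```

Keeping the classification rule in one small function means the same logic can feed both internal reviews and the system mapping that regulators ask for.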

Risk Identification and Analysis

AI risk hides in three places: your data, your models, and your decisions.

- Look at data inputs. Check for bias, poor quality, or sensitive data exposure.

- Study model behavior. Watch for drift, instability, or unexpected outputs.

- Review decisions made by AI. Ask how they impact users, revenue, and compliance.

A consistent AI risk assessment process connects these checks and brings clarity across systems.

Control Implementation

Controls turn insight into action. Here, bias testing helps detect unfair outcomes early. Model validation checks if outputs match business logic. Access controls limit who can change models or data, and monitoring systems track performance in real time.

Strong security controls give teams confidence and create proof during audits. They also support a reliable AI risk assessment by showing how risks are actively managed.
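One common way to run a basic bias test is to compare approval rates across groups. The sketch below is an illustration under stated assumptions, not a complete fairness audit: the data is made up, the function names are hypothetical, and the 0.8 review threshold is an assumed rule of thumb rather than a legal standard for every context.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs. Returns approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so the rates do not differ
    return min(rates.values()) / highest


# Illustrative data only: group A approved 8 of 10, group B approved 4 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)

ratio = disparate_impact_ratio(sample)
THRESHOLD = 0.8  # assumed review threshold; set this per your own policy
needs_review = ratio < THRESHOLD
```

A ratio well below the threshold does not prove unfair treatment on its own, but it is the kind of documented, repeatable check that gives auditors something concrete to review.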

Continuous Monitoring

AI changes over time. Put simply, data shifts, user behavior evolves, and models degrade.

Track model drift and flag anomalies quickly. Maintain detailed logs of system activity as well, because these logs support investigations and audits. Over time, continuous monitoring strengthens your AI risk assessment and keeps systems aligned with real-world conditions.
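Drift tracking can start with a simple statistic. The sketch below uses the Population Stability Index (PSI), one widely used option among several, to compare recent model scores against a baseline. The bin count, the 0.2 alert threshold, and the sample data are all assumptions for illustration.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 when the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor keeps the logarithm defined when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]      # scores seen at validation time
recent_same = [i / 100 for i in range(100)]   # same distribution, no shift
recent_shifted = [min(i / 100 + 0.3, 0.99) for i in range(100)]  # scores pushed upward

DRIFT_THRESHOLD = 0.2  # assumed alert level; tune per model
drifted = psi(baseline, recent_shifted) > DRIFT_THRESHOLD
```

Running a check like this on a schedule, and logging each result, turns "watch for drift" into a record you can show during an audit.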

Governance and Reporting

Clear ownership keeps accountability sharp. Assign roles to risk leaders and security teams.

Moreover, build simple dashboards for the board. Show risk levels, incidents, and control status. Leaders need visibility to make informed decisions.

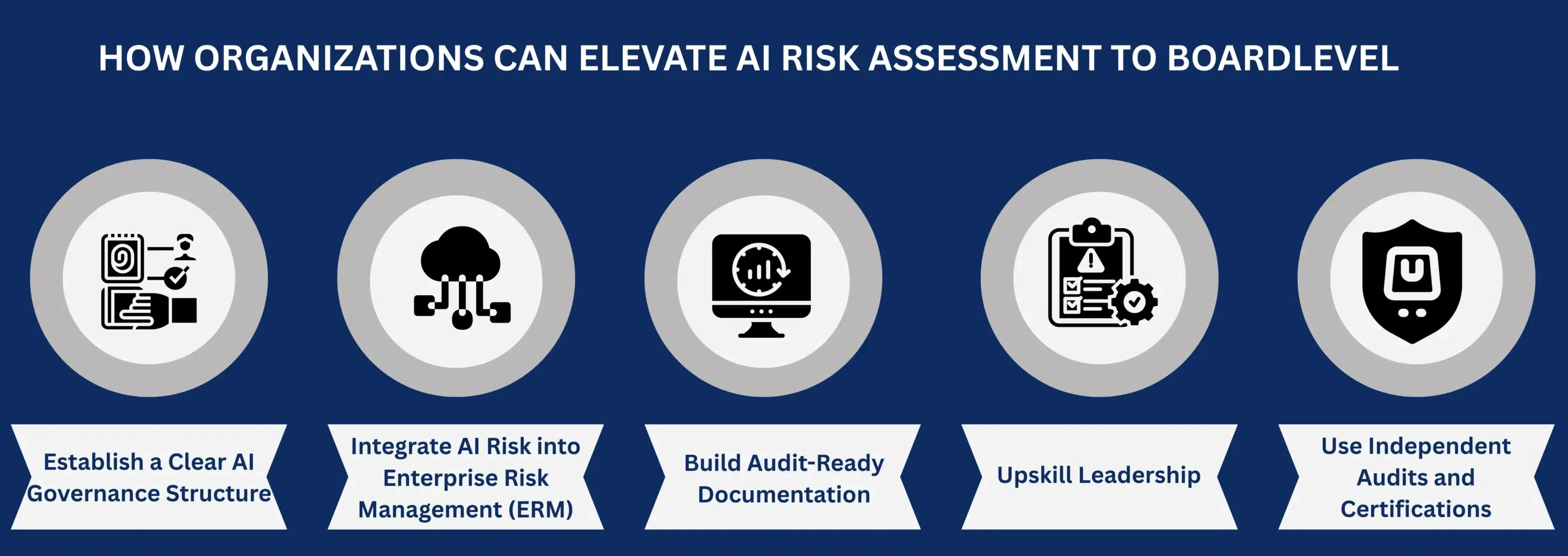

HOW ORGANIZATIONS CAN ELEVATE AI RISK ASSESSMENT TO THE BOARD LEVEL

Building an enterprise-level AI risk assessment model demands clear structure and leadership involvement. In this section, let’s explore the key steps involved in developing one.

Establish a Clear AI Governance Structure

AI risk often falls between teams. That creates confusion and slow responses. Therefore, set up a cross-functional group with leaders from security, legal, data, and product. Give this group clear authority.

Furthermore, define how information flows to the board. When ownership is clear, decisions move faster, and gaps shrink.

Integrate AI Risk into Enterprise Risk Management (ERM)

Many companies track financial and operational risks in one place. AI risk should sit in the same system. Add AI risk use cases to your risk register. Link each system to business impact.
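As a rough illustration, a risk register entry for an AI system could look like the sketch below. The 1-to-5 likelihood and impact scales, the multiplication-based score, and the example entries are assumptions; use whatever scoring your existing ERM process already applies.

```python
from dataclasses import dataclass


@dataclass
class RiskEntry:
    system: str
    risk: str
    business_impact: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # 1 (minor) to 5 (severe), assumed scale

    @property
    def score(self) -> int:
        """Simple likelihood x impact score, as used in many risk matrices."""
        return self.likelihood * self.impact


# Illustrative entries only; each one links a system to a business impact.
register = [
    RiskEntry("pricing-model", "model drift", "revenue loss from mispriced plans", 3, 4),
    RiskEntry("resume-filter", "biased screening", "regulatory action, reputational harm", 2, 5),
    RiskEntry("support-chatbot", "inaccurate answers", "customer complaints", 3, 2),
]

# Highest scores first, so reporting leads with the largest exposures.
top_risks = sorted(register, key=lambda r: r.score, reverse=True)
for entry in top_risks:
    print(f"{entry.score:>2}  {entry.system}: {entry.risk} -> {entry.business_impact}")
```

Because AI entries share the same shape as financial and operational risks, they can sit in the same register and roll up into the same reports.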

Build Audit-Ready Documentation

Audit pressure has increased. Regulators and clients ask for real evidence rather than verbal assurance. Maintain records of risk assessments, testing results, and control checks.

Keep incident logs as well. If an AI system fails, document the incident response procedures. This level of detail builds trust during audits and client reviews.
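One way to make such records harder to dispute is a hash-chained log, where each entry's hash covers the previous entry. This is a swapped-in technique for illustration, not something a specific standard prescribes, and the record fields and function names below are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash used before the first entry


def append_record(log, record):
    """Append a record whose hash covers its content and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    payload = json.dumps(record, sort_keys=True)
    entry_hash = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
    log.append({"record": record, "prev_hash": prev_hash, "hash": entry_hash})


def verify(log):
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = GENESIS
    for entry in log:
        payload = json.dumps(entry["record"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_record(audit_log, {"type": "bias_test", "system": "resume-filter", "result": "pass"})
append_record(
    audit_log,
    {"type": "incident", "system": "pricing-model", "summary": "price swings reported by client"},
)

intact = verify(audit_log)  # True as long as no entry has been changed
```

If someone edits an earlier record after the fact, `verify` returns `False`, which gives auditors a quick integrity check on top of the records themselves.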

Upskill Leadership

Board members need a working knowledge of AI risks. They don’t need to code, but they must understand how decisions get made.

Short workshops can help. Use real examples from your own systems. Show where things can go wrong and how controls reduce that risk. This builds confidence and sharper oversight during AI risk management.

Use Independent Audits and Certifications

External validation adds credibility. Independent audits test your controls and highlight blind spots, and certifications aligned with AI and security standards support compliance efforts.

Investors and enterprise clients look for this signal as proof that your AI systems meet accepted risk and AI governance practices.

CONCLUSION

AI now drives decisions that shape revenue, compliance, and customer trust. That reality puts pressure on leadership to prove that these systems work as intended. Verbal assurances no longer hold up in audits or investor discussions. You need evidence, clarity, and control.

This is where working with an independent audit firm makes a real difference.

CertPro CPA LLC evaluates your AI systems through a structured, evidence-based approach. We focus on what regulators and boards expect to see: clear risk classification, documented controls, and verifiable outcomes.

For businesses using AI at scale, ISO 42001 certification adds another layer of trust.

CertPro conducts independent ISO 42001 assessments and certification for audit-ready organizations. We evaluate your AI Management System using evidence-based testing of policies, controls, and governance practices. Based on this conformity assessment, we issue certification that reflects actual alignment with the standard. This independent validation strengthens trust with clients, regulators, and investors.

If you are unclear about your AI management system and policies, connect with us to get it independently assessed based on standard audit procedures.

FAQ

What is AI risk assessment?

AI risk assessment is the process of identifying, classifying, and reducing risks across an AI system’s lifecycle. It covers data integrity, model behavior, compliance exposure, and decision outputs to help organizations catch and control problems before they impact business outcomes.

What are the key risks that AI risk assessment covers?

AI risk assessment covers five core risk areas: data privacy and leakage, model bias and drift, operational errors from automation, regulatory non-compliance, and reputational damage. Each category directly affects business performance and must be actively monitored and controlled.

What regulatory frameworks require AI risk assessment?

The EU AI Act and sector-specific rules in finance and healthcare expect documented AI risk controls, while voluntary frameworks such as the NIST AI RMF and ISO 42001 give organizations a structure for building them. Regulators now expect companies to classify AI systems by risk level and maintain clear documentation and audit trails for any high-risk use case.

What role does AI risk assessment play in ISO 42001 certification?

ISO 42001 is the international standard for AI management systems. An AI risk assessment provides the documented evidence needed to demonstrate that an organization’s controls are active, tested, and aligned with the standard’s requirements for governance, transparency, and accountability across AI systems.

What is the difference between AI risk assessment and traditional IT risk assessment?

Traditional IT risk assessment focuses on systems and infrastructure. AI risk assessment also evaluates model behavior, training data quality, output fairness, and decision transparency.
