A recent McKinsey survey reports that 78 percent of businesses use AI in at least one business function (Source). AI has clearly become an integral part of the modern business world. The boardroom question is no longer whether to adopt AI but, “How do we become an organization that AI amplifies rather than undermines?” This is precisely where AI compliance comes into play. The rise of AI has created excitement in the market about increased efficiency and scalability. But it has also raised concern, because many AI systems are opaque and difficult to explain.

At the same time, this revolutionary technology brings its own risks, such as algorithmic bias, unclear accountability, and privacy concerns, that can turn your pursuit of innovation into a liability with a single mishap. Global regulators are watching, and investors are questioning your ability to manage these risks. Even a small security incident can break trust that took years to build. Therefore, AI compliance is no longer optional but a survival strategy. This brings us to ISO 42001, the global framework for AI governance, risk, and compliance.

ISO/IEC 42001, published in December 2023, is the world’s first AI management system standard. Unlike other guidelines, it gives businesses an intentional, structured way to manage AI responsibly, and it focuses on boardroom priorities: governance, transparency, and continuous improvement. Regulatory compliance for AI matters because unmanaged AI eventually creates operational risks that can halt business growth. It can also invite lawsuits, damage your reputation, and erode stakeholder trust. This blog explains why ISO 42001 and AI compliance matter, how they streamline your risk management process, and how they support operational excellence. With sound AI governance, risk, and compliance procedures, you can keep innovating while using AI responsibly and scaling ethically.

TL;DR:

Concern: AI is everywhere; 78% of businesses already use it in core operations. But with innovation comes risk: algorithmic bias, security breaches, privacy violations, and unclear accountability. One mistake can destroy trust, trigger lawsuits, and damage growth. Global regulators and investors are watching closely, so AI compliance isn’t optional anymore; it’s survival.

Overview: ISO/IEC 42001 is the world’s first AI Management System Standard (AIMS). Unlike guidelines, it offers a structured, certifiable framework for responsible AI governance. It covers leadership, planning, operations, risk assessment, Annex A controls (like transparency and bias checks), and Annex B guidance for practical implementation. It integrates with existing risk systems (like ISO 31000) and addresses AI-specific risks like bias, security gaps, privacy issues, and hallucinations.

Solution: Adopting ISO 42001 makes your AI trustworthy, auditable, and future-proof. It helps you scale AI responsibly, meet compliance expectations, and build stakeholder confidence. Delaying adoption only raises the cost of risk. CertPro helps businesses implement ISO 42001 efficiently—without slowing down innovation. From risk assessments to certification, we make AI compliance practical, tailored, and aligned with your growth goals.

WHAT IS AI COMPLIANCE?

AI compliance is the process of managing your AI systems and tools in line with applicable legal, ethical, technical, and governance requirements. It is not just about following laws; it is about building trust and accountability. For instance, imagine your AI makes a biased decision or exposes personal data. That damage doesn’t just hurt your reputation; it also breaks customer trust and creates operational chaos. Hence, AI compliance must be a vital part of your growth strategy from the earliest stages of AI design and adoption.

In this context, the ISO 42001 AI Management System (AIMS) works as a blueprint for managing AI responsibly. It is more than a list of best practices: it gives you a system and structure for using AI ethically and responsibly. ISO 42001 helps organizations set clear policies, apply the right controls, and monitor AI risks continuously. You might be wondering about the relevance of existing frameworks, such as the EU AI Act or the NIST AI Risk Management Framework. They are important, but they serve a different purpose. The EU AI Act is a binding regulation that governs AI according to its risk level and impact, while the NIST AI RMF offers voluntary guidance for identifying and managing those risks. Neither offers a certifiable management system structure.

ISO 42001 fills that gap. It’s a globally recognized, auditable, and certifiable standard designed for organizations that want both compliance and proof of responsibility. For businesses under pressure to innovate without breaking trust, this matters. ISO 42001 gives you a way to scale AI safely and meet regulatory expectations. Plus, it shows your stakeholders that your AI is not a black box: it is accountable, monitored, and built on strong governance.

CORE REQUIREMENTS OF ISO 42001 FOR RISK-DRIVEN AI COMPLIANCE

ISO 42001 is a complete management system designed to keep your AI safe, fair, and accountable. Its primary focus is a risk-based approach to managing AI, because AI risks are not static: they evolve with every new model, dataset, and update. Beyond establishing the organization’s context and the scope of its AI management system, the standard’s requirements span six key areas: leadership, planning, support, operations, performance evaluation, and continual improvement.

- Leadership isn’t just about signing policies; it’s about ownership. Who’s accountable when an AI system fails?

- Planning ensures risks are identified early.

- Support covers resources and skills because compliance fails when teams aren’t trained.

- Operations guide the day-to-day handling of AI systems.

- Performance evaluation checks if your controls work.

- Finally, continual improvement ensures you’re not locked into yesterday’s approach.

Another core requirement is implementing the appropriate controls from Annex A, which lists specific controls such as transparency measures, bias detection, and human oversight. These controls serve as safeguards against costly errors. Annex B, in turn, provides implementation guidance that turns these controls into practical steps. For example, it explains how to document your AI lifecycle so auditors and regulators see accountability, not chaos.

This matters because a mismanaged or flawed model can trigger lawsuits, regulatory fines, or a viral PR disaster. ISO 42001 requires risk assessment, mitigation, and oversight at every stage of the AI lifecycle, from design through deployment and beyond. This means you are proactive rather than reactive, preventing issues before they escalate.
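To make the risk-based approach more concrete, here is a minimal Python sketch of an AI risk register. ISO 42001 does not prescribe a scoring method, so the 1 to 5 likelihood and impact scales, the likelihood times impact score, the treatment threshold of 12, the lifecycle stage names, and the example risks and owners are all illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    # Illustrative lifecycle stages (assumed names, not defined by ISO 42001).
    DESIGN = "design"
    DEPLOYMENT = "deployment"
    MONITORING = "monitoring"


@dataclass
class AIRisk:
    risk_id: str
    description: str
    stage: Stage
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (minor) to 5 (severe)
    owner: str       # named accountable person

    @property
    def score(self) -> int:
        # Likelihood x impact: a common convention, not a requirement of the standard.
        return self.likelihood * self.impact


def needs_treatment(risks: list[AIRisk], threshold: int = 12) -> list[AIRisk]:
    """Return risks scoring at or above the assumed threshold, highest first."""
    flagged = [r for r in risks if r.score >= threshold]
    return sorted(flagged, key=lambda r: r.score, reverse=True)


# Example register entries (hypothetical).
register = [
    AIRisk("R1", "Training data under-represents some applicant groups",
           Stage.DESIGN, likelihood=4, impact=4, owner="Head of Data Science"),
    AIRisk("R2", "Model endpoint exposed without authentication",
           Stage.DEPLOYMENT, likelihood=3, impact=5, owner="CISO"),
    AIRisk("R3", "Accuracy drifts after an upstream data change",
           Stage.MONITORING, likelihood=3, impact=3, owner="MLOps Lead"),
]

for risk in needs_treatment(register):
    print(f"{risk.risk_id} [{risk.stage.value}] score={risk.score} owner={risk.owner}")
```

With these example values, R1 (score 16) and R2 (score 15) are flagged for treatment, while R3 (score 9) stays below the threshold. Recording an owner for every risk ties the register back to the leadership question of who is accountable when an AI system fails.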

HOW ISO 42001 AND AI COMPLIANCE BOOST YOUR RISK MANAGEMENT STRATEGY

The opportunities AI delivers for a business are unprecedented. AI is not just a tool; it is changing your organization’s core. But this shift comes with risks and uncertainties, including algorithmic bias, privacy breaches, and security flaws that can damage trust faster than any innovation can restore it. Therefore, rather than managing AI risk in isolation, it is better to integrate it into your broader risk management strategy.

AI compliance with ISO 42001 makes such integration possible. It aligns with ISO 31000 principles, which many organizations already use for enterprise risk management. This means you’re not reinventing the wheel; instead, you’re extending existing risk practices to cover AI-specific challenges, and the standard fits neatly into the governance, risk, and compliance (GRC) frameworks businesses already rely on. But ISO 42001 goes one step further. It weaves in ethical AI principles, such as fairness, transparency, and accountability, to build a system people can trust. For example, its controls call for documenting how your AI systems work and how they reach decisions. So when someone asks, “Why did the AI reject this loan?” you’ll have an answer and evidence. Another strength lies in detection. ISO 42001 drives risk identification across the entire AI lifecycle, from design to deployment to ongoing monitoring. It helps you flag bias, detect security gaps, manage privacy risks, and even control “hallucinations” in generative AI.
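As one illustration of how a bias check might feed this process, the Python sketch below compares loan approval rates between two groups using a disparate impact ratio. The metric and its 0.8 cut-off (the "four-fifths" rule of thumb from US employment-selection guidance) are a common fairness heuristic, not something ISO 42001 mandates, and the decisions are made-up example data.

```python
def selection_rate(outcomes: list[bool]) -> float:
    """Share of positive outcomes (e.g. approved loans) within one group."""
    if not outcomes:
        raise ValueError("group has no decisions")
    return sum(outcomes) / len(outcomes)


def disparate_impact_ratio(group: list[bool], reference: list[bool]) -> float:
    """Selection rate of `group` divided by the selection rate of `reference`."""
    reference_rate = selection_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no positive outcomes")
    return selection_rate(group) / reference_rate


# Hypothetical loan decisions: True means approved.
group_a = [True] * 60 + [False] * 40  # 60% approved (reference group)
group_b = [True] * 42 + [False] * 58  # 42% approved

ratio = disparate_impact_ratio(group_b, group_a)
needs_review = ratio < 0.8  # assumed four-fifths threshold, not an ISO 42001 rule
print(f"disparate impact ratio = {ratio:.2f}; flag for review: {needs_review}")
```

Here the ratio is 0.42 / 0.60 = 0.70, so the model is flagged. A flag like this does not prove discrimination on its own; it marks the model for human review and a documented investigation.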

When you approach AI regulatory compliance through ISO 42001, risk management becomes a structured, continuous process that protects your organization while supporting innovation. That’s the difference between surviving AI disruption and leading the change.

KEY STEPS TO FOLLOW IN BUILDING AI COMPLIANCE WITH ISO 42001

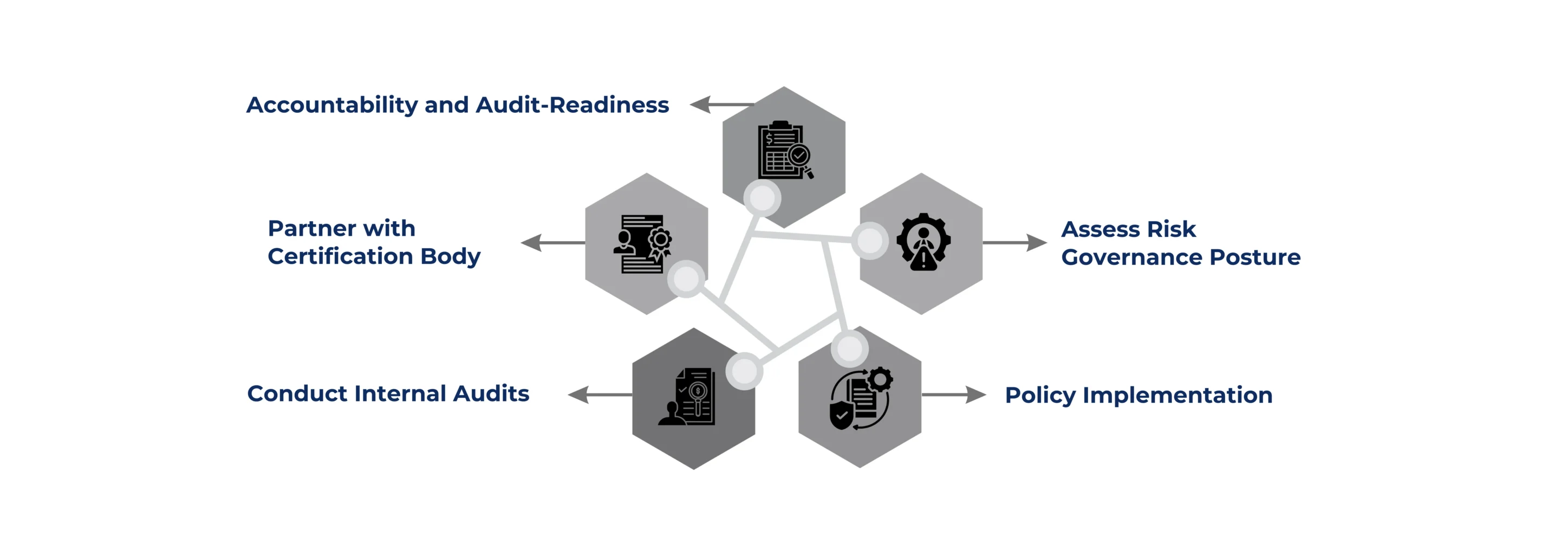

Building AI compliance with ISO 42001 requires a clear, structured roadmap. The following steps give businesses a practical way to kickstart their AI compliance journey.

Define AI Strategy and Scope: Identify which AI systems, processes, and teams will fall within the compliance framework, and set clear goals for ethical use, risk management, and operational efficiency.

Assess Risk and Governance Posture: Review existing policies, controls, and monitoring practices to identify gaps between your current state and the requirements of ISO 42001. This gap assessment shows where you need to improve before certification.

Policy Implementation: After the assessment, implement policies, controls, and training across teams. Policies should cover data handling, transparency, bias prevention, and accountability. Controls should ensure ongoing compliance and security. Training is vital so employees understand their responsibilities in AI governance.

Conduct Internal Audits: Next, conduct internal audits and readiness assessments. These confirm that your processes meet ISO 42001 requirements and prepare you for the formal certification audit.

Partner with an Audit Firm: Once you are ready, partner with a reputable audit firm such as CertPro for the formal certification audit and assessment.
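The gap assessment step can start as a simple comparison of required items against your current state. The Python sketch below shows that idea; the requirement names and status values are hypothetical placeholders, so map them to the actual ISO 42001 clauses and Annex A controls that apply to your scope.

```python
# Hypothetical requirement areas; replace with the actual ISO 42001 clauses
# and Annex A controls that apply to your scope.
REQUIRED = {
    "ai_policy": "Documented AI policy approved by leadership",
    "risk_assessment": "Repeatable AI risk assessment process",
    "impact_assessment": "AI system impact assessment",
    "data_quality": "Data quality and provenance controls",
    "human_oversight": "Defined human oversight for AI decisions",
    "internal_audit": "Internal audit program for the AIMS",
}

# Example current state collected from interviews and document review.
current_state = {
    "ai_policy": "implemented",
    "risk_assessment": "partial",
    "data_quality": "implemented",
    "human_oversight": "missing",
}


def find_gaps(required: dict[str, str], current: dict[str, str]) -> dict[str, str]:
    """Return every required item that is not fully implemented, with its status."""
    gaps = {}
    for key in required:
        # Items with no recorded evidence are treated as missing.
        status = current.get(key, "missing")
        if status != "implemented":
            gaps[key] = status
    return gaps


for key, status in find_gaps(REQUIRED, current_state).items():
    print(f"{status.upper():<8} {REQUIRED[key]}")
```

With this example data, the sketch reports four gaps: the risk assessment process is only partial, and impact assessment, human oversight, and internal audit have no recorded evidence yet. Treating unrecorded items as missing keeps the assessment conservative.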

The right tools and partnerships can also make the process easier. Governance, risk, and compliance (GRC) platforms help track policies and risks, and cloud platforms from providers such as AWS and Microsoft offer AI governance features. Partnering with expert ISO 42001 consultants like us can speed up audit readiness and reduce mistakes. Following this roadmap helps you achieve AI compliance and turns AI governance into a long-term advantage rather than a one-time task.

CONCLUSION

AI is no longer an experiment. It is powering critical business decisions, shaping customer experiences, and driving revenue. But with that power comes serious risk. A single AI failure, such as a biased decision, a security breach, or a privacy violation, can lead to lawsuits, reputational damage, and investor mistrust. Delaying AI compliance and ISO 42001 adoption doesn’t save money; it multiplies the cost of risk. Businesses must recognize that a regulatory fine or data breach typically costs far more than building a strong AI governance system now. This is especially true for startups and fast-growing businesses that rely heavily on AI to scale.

This is where CertPro can become your strategic ISO 42001 assessment and certification partner once you are audit-ready. We specialize in evaluating and certifying AI management systems against the ISO 42001 standard. Our process is practical, efficient, and focused on aligning certification with your business goals. Our team understands the unique challenges startups and enterprises face: limited resources, tight deadlines, and high pressure to innovate. Therefore, we help you design a compliance framework that keeps your AI ethical, secure, and trustworthy. We combine global expertise with industry experience to help ensure your systems meet international standards and ethical AI principles, inspiring stakeholder trust.

The question isn’t whether you can afford ISO 42001. It’s whether you can afford the cost of inaction. So, if you want to build AI responsibly, scale ethically, and stay ahead of regulations, this is the time to take action. Connect with CertPro today and start your ISO 42001 journey. Let’s make your AI trustworthy and future-proof.

FAQ

How can an organization become AI compliant?

To be AI compliant, organizations need to follow legal, ethical, and technical requirements by creating policies, managing risks, being transparent, and regularly reviewing their AI systems, often using frameworks such as ISO 42001 and meeting regulations such as the EU AI Act where they apply.

What are the compliance standards for AI?

AI compliance standards and frameworks include ISO/IEC 42001:2023 (certifiable AI management system standard), the EU AI Act (binding regulation), and the NIST AI RMF (voluntary risk management framework). These guide governance, fairness, transparency, and accountability practices.

What are the compliance concerns with AI?

Compliance concerns with AI include algorithmic bias, data privacy risks, lack of transparency, security vulnerabilities, accountability gaps, intellectual property issues, and non-compliance with evolving global regulations and industry-specific requirements.

What is AI ethics?

AI ethics refers to principles ensuring that artificial intelligence operates responsibly by promoting fairness, transparency, accountability, safety, respect for human rights, and human oversight throughout its use.

What are the key principles of ethical AI?

The key principles of ethical AI include fairness, transparency, accountability, privacy protection, safety, explainability, human oversight, and sustainability to ensure responsible development and trustworthy use of AI systems.

About the Author

RAGHURAM S

Raghuram S, Regional Manager in the United Kingdom, is a technical consulting expert with a focus on compliance and auditing. His profound understanding of technical landscapes contributes to innovative solutions that meet international standards.