LAST UPDATE — 08-20-2025

Artificial intelligence is now at the center of global regulation. Forbes recently reported that global CEOs are treating AI governance as an ethical and regulatory imperative in 2025. This trend is likely to continue because AI technologies are having a major impact across industries and sectors. Companies ranging from startups to global enterprises are embedding AI into their core operations. As adoption grows, governments are responding with strict AI regulations to ensure responsible and transparent use.

For example, the EU AI Act, formally adopted in 2024, is the world’s first comprehensive legal framework for AI. Similarly, Canada has proposed a federal law called the Artificial Intelligence and Data Act (AIDA) to regulate AI systems. The U.S. has the NIST AI Risk Management Framework (RMF), which provides voluntary guidance, while individual states such as Colorado, Illinois, and Utah have enacted their own AI-focused laws. These rules exist because, despite their innovative capabilities and efficiencies, AI technologies also carry risks. These include biased algorithms, data privacy violations, lack of transparency, and decisions that are difficult to explain. To ensure the safe and ethical use of AI, businesses therefore need to adhere to global rules and standards.

In a globalized business world, companies face growing complexity in complying with multiple AI regulations across jurisdictions. This is where ISO/IEC 42001:2023 has emerged as a foundation for AI governance, covering the fundamentals of an ethical AI standard. It provides structured guidance that helps businesses keep up with evolving global AI practices. This blog explores why AI regulations matter, how ISO 42001 supports compliance, and how your organization can use it to build trust and reduce risks.

TL;DR:

Concern: AI adoption is accelerating worldwide, but unregulated use creates risks such as bias, privacy violations, and lack of accountability. With the EU AI Act, U.S. state laws, and emerging global AI principles, organizations now face complex compliance challenges.

Overview: ISO/IEC 42001:2023 is the world’s first AI management system standard. Unlike technical regulations, it provides a structured governance framework that aligns with evolving rules, including EU AI Act compliance, by focusing on accountability, transparency, and continuous improvement.

Solution: By adopting ISO 42001, businesses can simplify compliance, reduce regulatory risks, and build trust with stakeholders. With expert guidance from partners like CertPro, organizations can integrate ISO 42001 into their existing systems, stay ahead of global AI regulations, and ensure ethical, responsible AI adoption.

WHAT ARE AI REGULATIONS?

AI regulations are laws, policies, and standards designed to govern the design, development, and use of artificial intelligence. Today, AI systems play a crucial role in decision-making, affecting people’s lives, businesses, and even national security. Without clear rules, AI can easily go wrong, leading to discrimination, privacy violations, or unsafe outcomes. The main goal of these regulations is to make AI systems safe, fair, and transparent. To meet that goal, businesses need to understand how their AI models work, where their data comes from, and whether the system is biased. These rules don’t just protect users; they also protect companies from lawsuits, fines, and reputational damage.

Several major frameworks lead the way in AI governance. The EU AI Act is the world’s first comprehensive law for AI. It classifies systems by risk and places strict rules on high-risk AI systems, so achieving EU AI Act compliance helps your organization deploy AI systems safely across European markets. In the U.S., there isn’t a single federal AI law yet, but there are executive orders and state-level laws such as the Colorado AI Act, AI-related amendments to the Illinois Human Rights Act, and the Utah AI Policy Act. In addition, the NIST AI Risk Management Framework offers voluntary guidance on responsible use. China’s AI regulations emphasize security, social stability, and fairness. On a global scale, the OECD AI Principles push for trustworthy AI practices worldwide.

The trend is clear. Regulations are evolving fast and spreading across borders, and businesses can’t afford to ignore them. Aligning early with these AI regulations can help you build trust, avoid risk, and stay competitive in a world where AI is everywhere.

WHY SHOULD AI BE REGULATED? THE IMPORTANCE OF AI GOVERNANCE

AI can complete many tasks faster and more efficiently than manual processes. IT help desks, hiring tools, chat support, and loan approval systems increasingly rely on AI systems that can write, code, design, and communicate. But how can you prove that everything AI does is right and ethical? If a data security incident occurs because of an AI tool, who in your firm will take responsibility? And how will your organization ensure that AI-based decisions are free from bias and discrimination? This is where AI regulations and AI governance become important. Let’s look at the key factors that reinforce the need to follow an ethical AI standard.

Ethical Concerns: The ethical and legal concerns surrounding AI systems are growing every day. Companies must take strong steps to prevent bias and discrimination in their AI models.

Accountability: Accountability must be a key factor in AI-driven decisions. People need to know who is responsible for addressing AI errors.

Trust: Winning the trust of consumers and key stakeholders is important for scaling a business. If your AI systems are transparent and understandable, users and investors are more willing to do business with you.

Risk Mitigation: Mitigating AI-related risks is one of the key areas of AI governance. Organizations must avoid data privacy violations by securing sensitive information and protecting systems from cyberattacks and operational risks.

Aligning With Global Mandates: The use of AI must align with global AI regulations and standards. The EU AI Act sets strict requirements for AI systems, GDPR governs the personal data those systems process, and sector-specific standards set boundaries that companies cannot ignore. Aligning with ISO 42001 can simplify your journey toward EU AI Act compliance by providing a structured AI management framework.

WHAT IS ISO 42001, AND WHAT MAKES IT UNIQUE IN THE CURRENT AI REGULATORY LANDSCAPE?

Businesses today use AI technologies to achieve results that were out of reach only a few years ago. Global leaders are shifting from a skeptical to a curious approach toward AI. Instead of fearing that AI will slow their growth, they are now using it to accelerate innovation. What many still lack is structured guidance for developing an AI governance framework that manages tools and systems effectively. In 2023, the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC) published ISO/IEC 42001, the world’s first comprehensive standard for implementing and maintaining an AI management system (AIMS). This ethical AI standard answers the collective need for a well-organized structure to manage AI.

The ISO 42001 AI management standard focuses on how organizations govern and manage AI responsibly, rather than setting technical rules that dictate how an algorithm must function. It works on the principle of the Plan-Do-Check-Act (PDCA) cycle. This means businesses must plan AI objectives, implement controls, monitor results, and improve continuously to maintain a robust AI governance framework. What makes ISO 42001 unique is its management system-based approach. Instead of focusing only on regulatory compliance, the standard embeds AI governance into your business strategy and risk frameworks. It also addresses critical pain points: governance for accountability, risk management to prevent harmful outcomes, transparency to build trust, and ethical oversight to avoid bias or misuse.

Adopting this international standard shows that an organization is committed to creating policies, defining roles, and setting up processes that adapt to the risks of AI. To keep pace with changing AI regulations, the standard treats ongoing improvement as a key part of managing AI ethically.

HOW ISO 42001 SUPPORTS COMPLIANCE WITH KEY GLOBAL AI REGULATIONS

AI regulations are growing more complex. For example, the EU AI Act sets strict rules for high-risk AI, requiring risk management and transparency. Article 15 specifically mandates accuracy, robustness, and cybersecurity. These requirements are sound in theory, but many businesses struggle to implement them without being overwhelmed by the complexity. This is where ISO 42001 becomes a practical guide for building a solid AI governance framework.

The ISO 42001 AI management standard gives organizations a structured management system for AI governance. It outlines procedures, roles, and risk controls so you are not left scrambling when regulations change. For companies aiming to enter EU markets, the framework covers essentials such as managing risks, keeping clear records, and maintaining proper oversight. With this approach to AI compliance, businesses can build a solid foundation instead of relying on temporary, ad hoc measures every time AI regulations change.

In the U.S., state-level laws make AI governance fragmented. China prioritizes security and social stability, and other regions emphasize fairness, bias control, and explainability. ISO 42001 aligns with these diverse themes by treating trustworthiness, bias mitigation, and security as part of your AI lifecycle and management. This gives businesses the flexibility and preparedness to adhere to AI regulations in different jurisdictions, so you can adapt faster, avoid costly rework, and reduce compliance risks. Aligning with ISO 42001 is an intentional commitment to build confidence among your customers, regulators, and your team. In a world where trust drives business, that confidence can be your biggest competitive edge.

BENEFITS OF IMPLEMENTING ISO 42001 FOR COMPLIANCE

Implementing the ISO 42001 AI management standard addresses the real challenges your business faces when using AI. Today, customers, investors, and regulators want proof that, alongside your drive for innovation, you are committed to acting ethically and adhering to AI regulations. ISO 42001 builds that trust and shows that you have strong AI governance in place.

Another big advantage is certification. At a time when questions about AI compliance are growing louder, a formal certificate signals maturity. It tells your stakeholders that you are not experimenting without safeguards and that you follow structured, globally recognized practices in line with AI regulations. This level of assurance can help you win deals, attract partnerships, and smooth regulatory reviews. But the real strength of ISO 42001 lies in its flexibility. Whether you operate in Europe under GDPR or in regions with new AI acts, the framework scales with you. It doesn’t just prepare you for today; it keeps you future-ready. It also supports continuous improvement, so as global AI regulations and technology evolve, your governance evolves too.

Think of it as building an ethical backbone for AI-driven growth. Without it, you risk compliance penalties, legal headaches, and public backlash. With it, you gain trust, reduce risk, and stay competitive in a landscape where trust is the new currency.

BEST PRACTICES FOR ALIGNING ISO 42001 WITH GLOBAL AI COMPLIANCE

ISO 42001 is a voluntary standard, and that is both its strength and its challenge. It’s not a legal mandate, but regulatory regimes worldwide, from the EU AI Act to U.S. state laws and China’s AI governance rules, expect accountability. Organizations therefore cannot simply copy the standard and expect to be compliant. They must tailor it to the AI regulations that apply to them. So how do you make it work? Here are a few best practices.

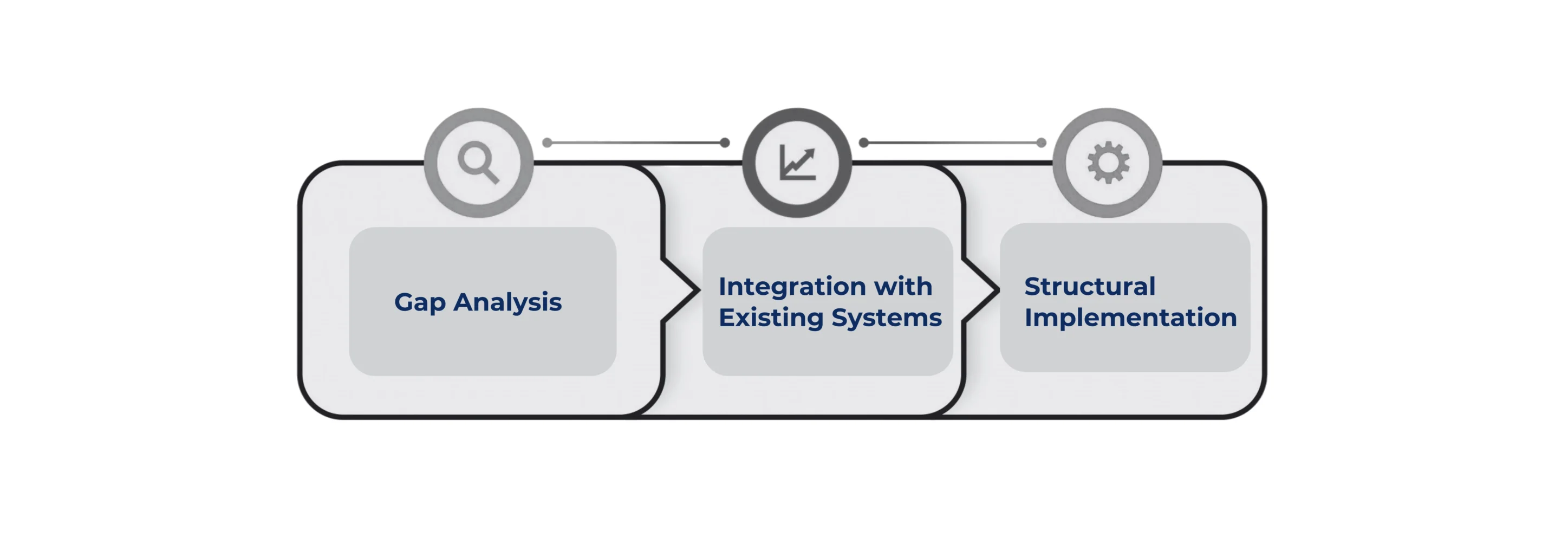

Gap Analysis: This exercise assesses where you stand today. Compare your current ISO 42001 controls with the requirements of the AI regulations that apply to you. For example, the EU might stress human oversight, while U.S. rules lean on transparency. By mapping these gaps, you can avoid blind spots and compliance weaknesses later.

Integration with Existing Systems: Next, integrate ISO 42001 with the frameworks you already use. If you already have an ISMS for ISO 27001, a risk management process, or a quality management system, this helps you avoid duplicating effort. The key is to identify overlapping controls and use them to achieve multi-standard compliance. Think of it like adding a new gear to an existing engine. For instance, extending your current incident response plan to cover AI-related risks saves time and resources.

Structural Implementation: Finally, make sure your plan is supported by a solid structure. Ensure that your documentation workflows, AI governance roles, and team training are clear. A written AI policy is especially valuable here, and everyone on your team, along with key stakeholders, must understand it. This is essential because, without alignment, compliance with AI regulations becomes a liability.
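
To make the gap analysis step more concrete, here is a minimal Python sketch of how such a mapping could be tracked. It is only an illustration under stated assumptions: the `Requirement` and `Control` classes, the `find_gaps` function, and the sample themes and control names are all hypothetical. They are not taken from ISO 42001, the EU AI Act, or any U.S. law, and a real gap analysis would work from the actual legal text and your own control inventory.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """One obligation drawn from a regulation (illustrative, not legal text)."""
    regulation: str
    theme: str


@dataclass
class Control:
    """One internal control and the themes it is meant to cover."""
    name: str
    themes: set[str] = field(default_factory=set)


def find_gaps(requirements, controls):
    """Return the requirements whose theme no existing control covers."""
    covered = set()
    for control in controls:
        covered |= control.themes
    return [r for r in requirements if r.theme not in covered]


# Hypothetical inputs, for illustration only.
requirements = [
    Requirement("EU AI Act", "human oversight"),
    Requirement("EU AI Act", "risk management"),
    Requirement("U.S. state law", "transparency"),
]
controls = [
    Control("AI risk register", {"risk management"}),
    Control("Model documentation policy", {"transparency"}),
]

gaps = find_gaps(requirements, controls)
for gap in gaps:
    print(f"Gap: {gap.regulation} -> {gap.theme}")
```

With these sample inputs, the only uncovered theme is human oversight. The same structure can also highlight overlapping controls: any control whose themes match requirements from more than one regulation is a candidate for reuse across standards.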

CERTPRO IS YOUR GO-TO PARTNER FOR ISO 42001 CERTIFICATION

As global competition to adopt AI intensifies, so does the need for responsible AI governance. The challenge is ensuring that your AI systems meet ethical, transparent, and compliant standards. Delaying this work can lead to regulatory penalties, lawsuits, and costly reputational damage. ISO/IEC 42001:2023 provides a forward-looking framework to keep AI ethical, transparent, and compliant. That makes ISO 42001 certification a priority for business success in this era. But you need someone to guide you with proven strategies and best practices.

That’s where CertPro shines as an assessment and certification partner who understands the real-world challenges businesses face. At CertPro, we specialize in ISO 42001 assessment and certification for organizations that are audit-ready. We don’t handle implementation or documentation; we verify, assess, and certify your AI management systems against the ISO 42001 standard. So don’t wait until compliance becomes a crisis. CertPro’s ISO 42001 assessment and certification services give you confidence, trust, and a competitive edge in an AI-focused market. Ready to make AI governance your strength? Contact CertPro today and future-proof your business success.

FAQ

What is AI regulatory compliance?

AI regulatory compliance means following laws, standards, and ethical guidelines to ensure AI systems are transparent, fair, safe, and accountable while minimizing bias and legal risks for businesses and users.

What are the OECD AI Principles?

The OECD AI Principles are global guidelines promoting trustworthy AI through fairness, transparency, accountability, human-centric values, and robust security to ensure ethical AI development, deployment, and governance across industries and nations.

What are the 5 key pillars of AI ethics?

The five pillars of AI ethics are fairness, transparency, accountability, privacy, and safety. These principles guide responsible AI use, ensuring ethical, unbiased, and trustworthy AI systems in compliance with global standards.

What is the difference between IT governance and AI governance?

IT governance manages overall technology policies and risks, while AI governance specifically focuses on ethical, legal, and transparent AI use, addressing bias, accountability, and compliance with emerging AI regulations and standards.

What is organizational AI governance?

Organizational AI governance is the framework that ensures responsible AI development and deployment within a company. It includes policies, oversight, accountability, risk management, and compliance with ethical and legal AI standards.

About the Author

ANUPAM SAHA

Anupam Saha, an accomplished Audit Team Leader, possesses expertise in implementing and managing standards across diverse domains. Serving as an ISO 27001 Lead Auditor, Anupam spearheads the establishment and optimization of robust information security frameworks.
