WHO AUDITS THE AUDITOR? WHY AI AUDITING ITSELF NEEDS INDEPENDENT OVERSIGHT

Recently, Deloitte found itself in the spotlight for all the wrong reasons. The firm revealed that an AI-generated report for a major government client had skipped key oversight procedures. The Australian Financial Review reported that the firm publicly apologized upon discovering a failure to follow “required governance steps.” That moment served as a stark reminder to the entire auditing community, and it leaves us with one profound question: if even the auditors responsible for AI can make mistakes, who is overseeing their work, and who is accountable for AI auditing procedures?

Artificial intelligence has quickly become the backbone of modern auditing. From scanning millions of transactions to identifying compliance gaps, AI auditing tools promise accuracy, speed, and transparency. However, a paradox arises when algorithms audit systems, or even each other: who verifies the accuracy of those audits? One flawed audit can create a chain reaction, spreading misinformation, masking bias, or even legitimizing flawed AI decisions.

This matters far beyond headlines. In today’s interconnected economy, an unverified AI auditing process doesn’t just make for bad press. It can trigger regulatory backlash, financial loss, and lasting reputational damage. For businesses bound by compliance frameworks like ISO 27001, ISO 42001, SOC 2, or GDPR, trust is their ticket to big deals and business success. But once that trust breaks, no algorithm can rebuild it. Trust is rebuilt through documented oversight, transparent reporting, and remediation. Algorithms can support this, but accountability remains human.

This article explores why AI auditing itself now demands independent oversight. We’ll unpack the challenges of auditing algorithms, explore models for transparent governance, and lay out practical guardrails that can restore confidence in the audit process. In a world where AI increasingly checks our books, screens our risks, and validates our compliance, it’s time someone checked the checkers.

TL;DR:

Concern: AI is transforming auditing, but even AI auditors need oversight. Recent cases, like Deloitte’s flawed AI-generated report, show what happens when machines audit without proper human checks: missed governance steps, bias, and loss of trust.

Overview: AI auditing now plays a crucial role in detecting compliance gaps and risks. However, without transparency and independent review, these tools can create errors that damage credibility and invite regulatory action. Current oversight methods fall short due to black-box models, skill gaps, and unclear global standards.

Solution: The solution lies in independent human oversight and structured frameworks like ISO/IEC 42001:2023, which define clear accountability, continuous monitoring, and transparent governance. By combining AI’s speed with human judgment, businesses can ensure ethical, reliable, and auditable AI operations, thereby protecting trust, reputation, and compliance in an AI-driven future.

WHAT IS AI AUDITING?

AI auditing has grown from a niche idea into a critical layer of accountability in today’s tech-driven world. At its core, an AI auditing process examines how artificial intelligence systems behave, make decisions, and align with laws or ethical standards. To begin with, let’s clarify the concepts.

- AI auditing is a process of assessing an AI system/tool itself for risk, compliance, fairness, security, and performance (the AI is the subject of the audit).

- Using AI in audits is applying AI as a technique to perform audit procedures on business processes, controls, or datasets (the business process is the subject; AI is just a tool).

Depending on who performs it, an AI audit falls into one of three types:

- First-party audits are done by internal teams, often model-risk or ethics units within the same organization. They know the system best but may face internal pressure to downplay issues.

- Second-party audits come from partners or vendors who review systems as part of a business agreement. They can be practical but sometimes lack objectivity.

- Third-party audits involve external experts or certification bodies. These certification audits bring credibility and independence, though they may struggle with limited access to proprietary data or complex AI models.

Organizations now rely on AI auditing procedures for many reasons. They’re used to check algorithms for bias or fairness, test safety and reliability, ensure regulatory compliance, and assess performance against intended goals.

Furthermore, the promise of AI auditing lies in its potential to build transparency and trust. If performed properly, it helps companies identify hidden risks early, demonstrate accountability to regulators, and prove to customers that their systems act responsibly.

But AI auditing lacks clear global standards, and many audits rely on opaque methods that aren’t easy to verify. Limited access and proprietary restrictions push some auditors into partial or incomplete assessments. In such cases, the audit might look thorough on paper but miss critical issues hidden deep within the algorithm’s logic.

Before turning to why independent oversight is essential for AI auditing procedures, let’s look at the role AI already plays in modern auditing.

THE ROLE OF AI IN MODERN AUDITING

AI is reshaping the way audits are done in the ever-changing modern business world. Traditionally, auditors manually reviewed transactions, reports, and control logs. Now, AI tools can do that work in real time, across massive volumes of data, and with fewer manual errors.

When we talk about AI in auditing, we’re not just talking about automation. It includes everything from internal audits that assess business controls to external regulatory and compliance audits that ensure organizations meet strict standards.

The biggest advantage that an AI auditing process delivers is speed and scale. It can analyze millions of entries within seconds, detect subtle patterns that humans might miss, and flag anomalies before they turn into risks.

In continuous monitoring, AI acts like a watchful assistant that never sleeps, offering early warnings about compliance breaches or suspicious behaviour. For companies under pressure to meet regulatory deadlines or manage global operations, that kind of insight is invaluable.

But these gains come with challenges. AI systems can inherit bias from their training data, leading to unfair or misleading conclusions. They’re often hard to explain, which makes it tough for auditors to justify decisions to regulators. Overreliance on AI can also dull human judgment, and poorly secured models may expose sensitive data to cyber threats.

So the goal here is to enhance auditors’ judgment rather than replace it. When used wisely, AI turns auditing from a backward-looking exercise into a forward-looking strategy that blends machine precision with human ethics and insight.

WHY IS INDEPENDENT OVERSIGHT CRUCIAL FOR AI AUDITING SYSTEMS?

AI can analyze huge amounts of data and find risks in seconds. But speed isn’t the same as judgment, because machines don’t understand ethics, intent, or context. AI scales detection, while judgment and accountability remain human responsibilities. That’s why human oversight matters. It brings accountability and fairness to AI auditing.

Humans make sure audit results are clear, correct, and compliant. Additionally, ISO/IEC 42001:2023 and the EU AI Act both emphasize human oversight, and the EU AI Act requires it for high-risk AI systems. In simple terms, AI can help, but people must stay responsible.

When left alone, AI systems can repeat or even worsen hidden bias. For example, a fraud detection model might wrongly flag normal transactions or miss real fraud due to bad data. This is where independent human reviewers add real value. They check AI outputs, test for bias, and confirm that audits meet ethical and legal rules. Accordingly, their oversight prevents small errors from turning into major compliance failures.
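One way to picture the reviewer's check described above is to compare the model's flags against the conclusions of an independent human reviewer on a sample of transactions. The following is a minimal sketch under stated assumptions: the `ReviewedCase` structure, the `error_rates` helper, and the sample data are all hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class ReviewedCase:
    model_flagged: bool    # what the fraud model decided
    reviewer_fraud: bool   # what the independent human reviewer concluded


def error_rates(cases):
    """Return (false_positive_rate, false_negative_rate) against reviewer labels."""
    false_pos = sum(c.model_flagged and not c.reviewer_fraud for c in cases)
    false_neg = sum(not c.model_flagged and c.reviewer_fraud for c in cases)
    legit = sum(not c.reviewer_fraud for c in cases)
    fraud = sum(c.reviewer_fraud for c in cases)
    # Guard against empty categories so a small sample does not crash the check.
    fpr = false_pos / legit if legit else 0.0
    fnr = false_neg / fraud if fraud else 0.0
    return fpr, fnr


# Hypothetical reviewed sample: six transactions.
sample = [
    ReviewedCase(True, True),    # correctly flagged fraud
    ReviewedCase(True, False),   # normal transaction wrongly flagged
    ReviewedCase(False, False),
    ReviewedCase(False, False),
    ReviewedCase(False, True),   # real fraud the model missed
    ReviewedCase(False, False),
]

fpr, fnr = error_rates(sample)
print(f"false positive rate: {fpr:.2f}, false negative rate: {fnr:.2f}")
# prints: false positive rate: 0.25, false negative rate: 0.50
```

In practice, the sample would need to be large enough and drawn independently of the model's own output for these rates to mean much.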

This approach also matches global standards. ISO/IEC 42001 requires clear accountability, traceable decisions, and well-documented human roles. Likewise, regulators like the EU AI Office and PCAOB expect audit firms to demonstrate accountability and evidence for significant judgments. When AI tools are used, firms should document human review and responsibility.

Ethical oversight of AI makes sure audit results are defensible and fair during reviews or legal checks. It fills the judgment gap that machines lack and ensures audits are both honest and explainable.

The future lies in hybrid auditing models. These combine AI’s speed with human insight. Frameworks such as ISO 42001, SOC 2, and ISO 27001 already support this balanced method. When humans and AI work together, audits become more reliable, transparent, and trustworthy.

WHY CURRENT OVERSIGHT IS INSUFFICIENT FOR THE AI AUDITING SPACE

Yes, AI auditors sound futuristic, but the systems meant to check those auditors are hard to inspect. One big problem is transparency. Many AI tools function as opaque “black boxes,” with no one truly understanding their inner workings. It’s like trying to grade an exam when the answers are written in invisible ink. Without clear “white-box” access, even skilled auditors struggle to explain or justify the decisions made by a model.

Then there’s the standards mess. Everyone talks about algorithmic audits, but no one agrees on what that actually means, because there are no consistent metrics, benchmarks, or audit methods. It’s like every player in a soccer match using their own rulebook. I’ve seen audit teams debate for hours over whether fairness should mean equal outcomes or equal opportunity, and that confusion hurts credibility.
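To see why that debate is more than semantics, here is a small sketch with made-up data in which the same model passes an "equal outcomes" check (equal approval rates across groups, often called demographic parity) but fails an "equal opportunity" check (equal approval rates among people who actually qualify). The groups, records, and helper names are hypothetical.

```python
def selection_rate(records):
    """Share of records the model approved (pred == 1)."""
    return sum(r["pred"] for r in records) / len(records)


def true_positive_rate(records):
    """Share of genuinely qualified records (label == 1) the model approved."""
    qualified = [r for r in records if r["label"] == 1]
    return sum(r["pred"] for r in qualified) / len(qualified)


# Hypothetical toy data for two groups of four applicants each.
group_a = [
    {"label": 1, "pred": 1}, {"label": 1, "pred": 1},
    {"label": 0, "pred": 0}, {"label": 0, "pred": 0},
]
group_b = [
    {"label": 1, "pred": 1}, {"label": 1, "pred": 1},
    {"label": 1, "pred": 0}, {"label": 0, "pred": 0},
]

# "Equal outcomes": do both groups get approved at the same rate?
parity_gap = abs(selection_rate(group_a) - selection_rate(group_b))

# "Equal opportunity": do qualified people in both groups get approved at the same rate?
opportunity_gap = abs(true_positive_rate(group_a) - true_positive_rate(group_b))

print(f"equal-outcomes gap: {parity_gap:.2f}")          # prints 0.00
print(f"equal-opportunity gap: {opportunity_gap:.2f}")  # prints 0.33
```

Both groups are approved half the time, so the first gap is zero, yet one qualified applicant in group B is rejected. An audit report that says "the model is fair" without naming the metric leaves that disagreement hidden.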

The lack of necessary skills makes the situation even worse. Most auditors weren’t trained in machine learning, data science, or cybersecurity. They know compliance, but not code. On top of this comes the bias of self-auditing: when companies assess their own systems, they risk what we call “audit washing,” where reports look clean but miss real issues.

Finally, regulations crawl while AI sprints ahead. Until relevant regulations are in place, AI oversight will feel like patching a leaky boat mid-storm. So, if you are reviewing an AI auditing system yourself, the following section offers practices for keeping that review fair and ethical.

BEST PRACTICES FOR REVIEWING AI AUDITING SYSTEMS

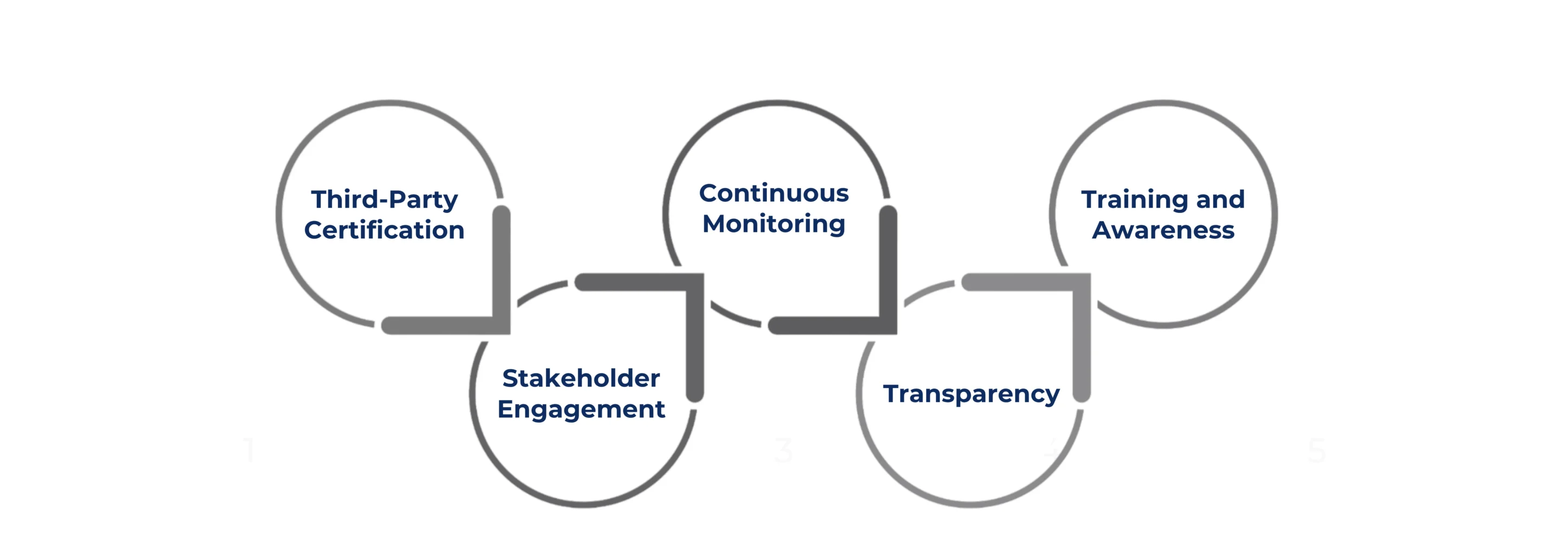

AI is revolutionizing the real-time auditing process. Yet there is a hidden risk: if a faulty AI system audits itself, errors could go unnoticed. Therefore, independent human oversight is now essential. Let’s look at some best practices for ensuring fair and accountable AI systems.

Third-Party Certification: One strong approach is independent third-party certification. Here, a neutral expert checks AI tools for fairness and accuracy. This builds trust with clients and regulators.

Stakeholder Engagement: Stakeholders must also be involved in the process. People affected by AI decisions must share their views. Their input helps auditors see problems that machines might miss.

Continuous Monitoring: Monitoring must be continuous. Use tools that track AI performance over time so they catch bias, mistakes, and drift early. This process keeps audits reliable.

Transparency: Transparency is key to AI oversight. Audit reports should be clear, and organizations should share findings, errors, and accuracy measures. Such information shows accountability and builds confidence.

Training and Awareness: Training for auditors matters too. Humans using AI must stay skilled and alert, and they must apply judgment. AI should support human decisions, not replace them.

In short, solid oversight of AI auditing needs five things: independent checks, stakeholder input, ongoing monitoring, clear reporting, and trained humans. Follow these practices. They keep audits fair, accurate, and trustworthy.
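As an illustration of the continuous monitoring practice, the sketch below computes a population stability index (PSI), one common way to quantify how far the current distribution of a model input or score has drifted from a baseline. This is only one possible technique, not a method prescribed by the practices above. The bucket counts are invented, and the 0.2 alert threshold is a widely quoted rule of thumb used here as an assumption, not a requirement of any standard.

```python
import math


def psi(baseline_counts, current_counts, eps=1e-6):
    """Population stability index between two bucketed distributions."""
    total_base = sum(baseline_counts)
    total_curr = sum(current_counts)
    score = 0.0
    for base, curr in zip(baseline_counts, current_counts):
        # Convert counts to shares; eps avoids log(0) for empty buckets.
        p_base = max(base / total_base, eps)
        p_curr = max(curr / total_curr, eps)
        score += (p_curr - p_base) * math.log(p_curr / p_base)
    return score


ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb threshold, tune per model

# Hypothetical counts of model scores per bucket at validation time vs. this month.
baseline = [25, 25, 25, 25]
this_month = [10, 20, 30, 40]

drift = psi(baseline, this_month)
if drift > ALERT_THRESHOLD:
    print(f"PSI {drift:.3f} exceeds threshold: route to human review")
else:
    print(f"PSI {drift:.3f} within tolerance")
```

The point of the design is that the alert triggers a human review rather than an automatic fix, which keeps the oversight loop outside the system being monitored.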

HOW ISO 42001 GUIDES AI GOVERNANCE AND AUDITABILITY

AI governance was all talk and little structure until ISO/IEC 42001:2023 emerged. This new international standard finally gives businesses a solid framework to manage AI responsibly. Think of it as the ISO 27001 of artificial intelligence. It sets clear policies, controls, and governance measures that make AI systems transparent, reliable, and accountable. In simple terms, it doesn’t just tell you what to do but how to do it in a repeatable, auditable way.

What it gives audit teams:

- Clear Accountability: Roles/RACI for model ownership and approvals.

- Lifecycle Control: Documented model versions, change control, and rollback.

- Risk and Compliance Mapping: Alignment with applicable laws (e.g., the EU AI Act and GDPR).

- Measurement and Monitoring: KPIs for performance, bias, and drift with thresholds.

- Continuous Improvement: Periodic reviews, corrective actions, and reassessments.

Certification under ISO 42001 acts as a trust signal for your business. When an audit firm earns it, clients know their AI-driven reviews aren’t black boxes. By meeting such recognized international standards for fairness and transparency, you satisfy regulators, too.
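The "Measurement and Monitoring" item in the list above can be made concrete with a small threshold check. This is a hedged sketch: the KPI names, values, and limits are hypothetical examples chosen for illustration, and ISO/IEC 42001 itself does not prescribe these numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Kpi:
    name: str
    value: float            # latest measured value
    limit: float            # agreed threshold (assumed, set by the organization)
    higher_is_better: bool

    def breached(self) -> bool:
        """True when the measured value is on the wrong side of the limit."""
        if self.higher_is_better:
            return self.value < self.limit
        return self.value > self.limit


# Hypothetical monthly readings for one model.
kpis = [
    Kpi("accuracy", value=0.93, limit=0.90, higher_is_better=True),
    Kpi("fairness_gap", value=0.08, limit=0.05, higher_is_better=False),
    Kpi("drift_score", value=0.12, limit=0.20, higher_is_better=False),
]

breaches = [k.name for k in kpis if k.breached()]
print(breaches)  # prints ['fairness_gap']
```

Each breach would then feed the corrective actions and periodic reviews listed under continuous improvement, with a named human owner signing off on the response.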

CONCLUSION

AI is reshaping compliance faster than most businesses can adapt. Without clear oversight, even the smartest AI auditing tools can go wrong, risking biased results, failed audits, and regulatory penalties. That’s where CertPro comes in.

At CertPro, we help organizations make sense of this fast-changing landscape through ISO/IEC 42001:2023 assessment and certification services for audit-ready clients. Our experts simplify the complex AI standards into actionable goals. CertPro acts as your independent evaluator, confirming that your controls, governance structure, and documentation meet international standards. Our assessments help you prove compliance maturity, demonstrate ethical AI use, and gain credibility with regulators, partners, and clients.

Startups and growing firms often delay certification, thinking they still have time to act. But waiting only raises the stakes. Each month without clear AI governance increases your exposure to compliance failures, data misuse, and reputational loss.

Ready to validate your AI governance framework? Partner with CertPro today to achieve ISO 42001 certification and show the world you’re audit-ready, compliant, and future-proof.

FAQ

What is AI auditing?

AI auditing is an evaluation of an AI system’s risks, performance, compliance, and documentation across its lifecycle, including data, models, and controls.

Why is independent oversight needed for AI systems?

Independent oversight is needed to avoid conflicts of interest, validate testing depth, and ensure results are understandable, reproducible, and compliant with applicable laws.

What is AI oversight?

AI oversight is the process of monitoring, reviewing, and validating how artificial intelligence systems make decisions. It ensures fairness, accountability, and compliance with laws and standards, preventing bias, security risks, or unethical outcomes in AI-driven operations.

What are the pillars of AI governance?

The key pillars of AI governance include accountability, transparency, fairness, data integrity, risk management, and continuous monitoring. These principles help organizations build responsible, ethical, and compliant AI systems that meet international standards like ISO/IEC 42001:2023 and regulatory expectations.

Does the EU AI Act require human oversight?

Yes. For high-risk AI systems, it requires human oversight and risk management. Organizations must map their systems to the correct risk category.
