CROSS-BORDER AI GOVERNANCE FRAMEWORK FOR GLOBAL COMPLIANCE

Companies operating across multiple regions need a clear cross-border AI governance framework to operate responsibly and legally. This type of framework consolidates multiple sets of rules and gives teams a simple way to manage risk, implement controls, and stay accountable. As companies handle AI across borders, they face a mix of laws and local expectations that can slow business growth.

Global businesses have started deploying machine learning tools, automated decisions, and large data models into their core operations. Yet regions such as the EU, the US, the UK, and other fast-growing nations each regulate AI differently.

Each has its own stance on data protection, model clarity, fairness, and responsible use of AI. These differences create legal tension and risk for companies, and quick fixes or scattered compliance tasks cannot resolve them.

Without one shared governance structure, teams may face rules that point in opposite directions. One region may demand strict limits on data use and require all data to remain inside its borders. Conversely, another region may expect full clarity about how an algorithm works. These mixed demands could slow product releases, create blind spots, and increase the chance of penalties or public criticism.

Therefore, a clear cross-border AI governance framework is the way forward. It brings all oversight into a single system: it sets common rules for all teams and leaves room for region-specific regulations. This approach cuts risk, keeps AI performance steady, and builds trust with customers, partners, and regulators.

This article explains a simple and practical method to create such a framework. It draws from new regulations, proven industry practices, and real challenges that global companies face today.

TL;DR:

Concern: Global companies face conflicting AI rules across regions. These differences create legal risk, slow product releases, and increase the chance of penalties. Teams often struggle because they work without one shared system to manage AI safely.

Overview: Developing a cross-border AI governance framework helps companies manage data rules, fairness expectations, and regional demands in a consistent way. It creates one structure for risk control, documentation, and oversight while still allowing room for region-specific requirements.

Solution: A unified AI governance framework built on ISO 42001 equips companies with clear policies, controls, and roles across all regions. It reduces confusion, supports audit readiness, and protects long-term growth. With CertPro’s guidance, businesses can set up this system and achieve reliable global compliance.

WHAT IS AN AI GOVERNANCE FRAMEWORK?

An AI governance framework is the structure that guides organizations in developing and using artificial intelligence. It brings together policies, controls, processes, roles, and technical standards so teams can develop and deploy AI in a safe and consistent way.

The core objectives of an AI governance framework include:

• Safety, so AI systems don’t cause harm or create unpredictable outcomes.

• Fairness, by reducing bias in training data and model outputs.

• Transparency, so teams understand how decisions are made.

• Accountability, by defining who is responsible for each stage of the AI lifecycle.

• Compliance and ethical use, ensuring alignment with laws, industry standards, and internal policies.

Operating without this structure exposes an organization to several risks. Common ones include:

• Regulatory violations when AI tools are deployed without proper oversight.

• Data misuse or privacy breaches that expose sensitive information.

• Bias or discrimination baked into models and decision processes.

• Reputational damage when customers lose trust in AI-based decisions.

• Inconsistent practices across teams or countries that lead to operational slowdowns.

For global businesses, these challenges become even more complex. AI often depends on cross-border data transfers, and each region has its own legal expectations and cultural standards. Without a solid framework, organizations struggle to keep pace with changing rules and stakeholder demands. A strategic AI governance framework is therefore essential. It helps your firm scale AI responsibly while protecting your brand, customers, and long-term growth.

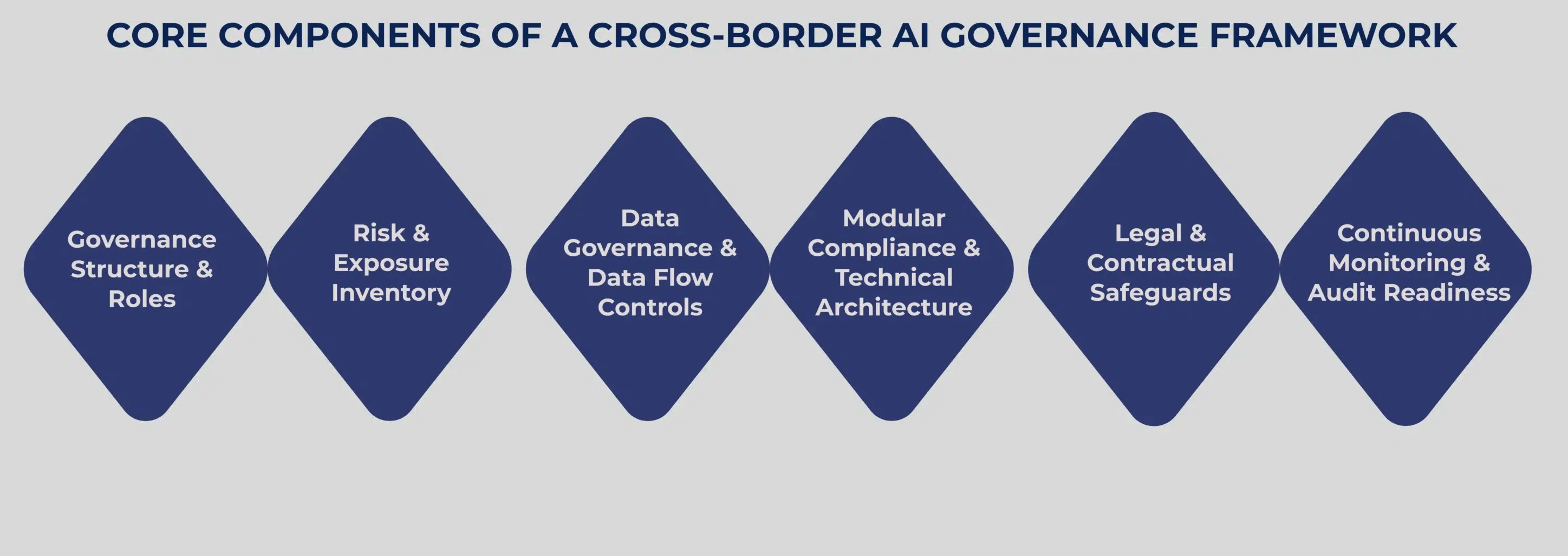

CORE COMPONENTS OF A CROSS-BORDER AI GOVERNANCE FRAMEWORK

Building an effective cross-border AI governance framework requires clear structure, repeatable processes, and the ability to adapt to different legal environments. This section covers each component in detail.

Governance Structure & Roles

The most important component of an AI governance framework is accountability. Define who is in charge of, and responsible for, every key step in the process. You need clear decision-makers, AI stewards, compliance leads, and oversight teams in every region you operate in.

Risk & Exposure Inventory

The next essential component is knowing which AI systems and data you own and exactly where they are stored. Document every AI system, track the data it uses, and note the countries it touches. Then classify systems based on data sensitivity, user demographics, or potential regulatory impact.
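As a rough illustration, an inventory entry could be modeled as a small record with a classification rule attached. The sketch below is only one possible shape: the field names, the three-level sensitivity scale, and the tiering rule are all assumptions made for this example and do not come from any regulation or standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical three-level data sensitivity scale."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class AISystemRecord:
    """One inventory entry: what the system is, what data it uses, where it runs."""
    name: str
    owner: str
    datasets: list[str]
    countries: set[str]
    sensitivity: Sensitivity
    affects_individuals: bool = False  # e.g. automated decisions about people

    def review_tier(self) -> str:
        """Illustrative rule: prioritise sensitive systems that affect people."""
        if self.sensitivity is Sensitivity.HIGH and self.affects_individuals:
            return "priority"
        if self.sensitivity >= Sensitivity.MEDIUM or len(self.countries) > 1:
            return "standard"
        return "light"


# Example entries; system names, owners, and datasets are made up.
inventory = [
    AISystemRecord("credit-scoring", "risk-team", ["loan_history"], {"DE", "FR"},
                   Sensitivity.HIGH, affects_individuals=True),
    AISystemRecord("doc-search", "it-team", ["internal_wiki"], {"US"}, Sensitivity.LOW),
]

for record in inventory:
    print(record.name, sorted(record.countries), record.review_tier())
```

Keeping the classification rule next to the record means every system is tiered the same way, whichever regional team registers it.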

Data Governance & Data Flow Controls

Data rules vary by region. Some countries, for example, demand that data stay local. Consider using privacy-preserving techniques, like federated learning or differential privacy, to share insights without moving data unlawfully.
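A data flow control can start as a simple deny-by-default check that runs before any dataset leaves its region of origin. The sketch below assumes a hypothetical policy table; the region names and the allowed destinations are placeholders, not statements about any real law, and the check does not implement federated learning or differential privacy.

```python
# Hypothetical residency policy: for each region where data originates,
# the set of regions where that data may be processed.
# These entries are placeholders, not statements about any real law.
RESIDENCY_POLICY = {
    "region_a": {"region_a"},                          # strict localisation
    "region_b": {"region_b", "region_c"},              # one partner region allowed
    "region_c": {"region_a", "region_b", "region_c"},  # no restriction in this example
}


def transfer_allowed(origin: str, destination: str) -> bool:
    """Return True only if the policy explicitly permits the transfer (deny by default)."""
    return destination in RESIDENCY_POLICY.get(origin, set())


def plan_processing(origin: str, preferred: str) -> str:
    """Process in the preferred region when permitted, otherwise keep data at its origin."""
    return preferred if transfer_allowed(origin, preferred) else origin


print(plan_processing("region_a", "region_c"))
print(plan_processing("region_b", "region_c"))
```

Denying unknown origins by default means a newly added market cannot move data until someone has recorded its rules.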

Modular Compliance & Technical Architecture

Focus on building flexible AI systems that adapt to each region, with configurable compliance modules or region-specific model versions. This flexibility avoids major rewrites in your AI governance framework when new rules appear.
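One way to picture a configurable compliance module is a global baseline plus small per-region overrides. The following is a minimal sketch under that assumption; every setting name and value is hypothetical and chosen only to show the merge.

```python
from copy import deepcopy

# Global baseline applied everywhere. All keys and values are hypothetical.
BASELINE = {
    "human_review_required": False,
    "log_retention_days": 90,
    "explanations_enabled": False,
}

# Region-specific overrides, kept separate so a new rule means editing one entry.
REGION_OVERRIDES = {
    "region_a": {"human_review_required": True, "explanations_enabled": True},
    "region_b": {"log_retention_days": 365},
}


def effective_config(region: str) -> dict:
    """Merge the baseline with a region's overrides; unknown regions get the baseline."""
    config = deepcopy(BASELINE)
    config.update(REGION_OVERRIDES.get(region, {}))
    return config


print(effective_config("region_a"))
print(effective_config("region_b"))
```

Because the overrides live apart from the baseline, a new regional rule becomes a one-entry change rather than a rewrite of shared logic.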

Legal & Contractual Safeguards

Put contracts in place that cover data retention, legal disclosures, jurisdiction-specific obligations, and vendor responsibilities. These safeguards reduce surprises when regulators ask for proof.

Continuous Monitoring & Audit Readiness

Track regulatory updates, monitor AI usage, maintain logs, and keep clear audit trails, especially for high-risk applications. Continuous monitoring lets you respond to minor issues before they turn into major escalations.
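An audit trail is more convincing when later edits to it can be detected. One common technique is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below assumes a simple in-memory list and made-up field names; a production system would also need durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash used before the first entry


def _digest(body: dict) -> str:
    """Hash an entry body deterministically (sorted keys, SHA-256)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, system: str, event: str, region: str) -> dict:
    """Append an audit entry whose hash covers its content and the previous hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system,
        "event": event,
        "region": region,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited entry, or one removed mid-chain, fails the check."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list = []
append_entry(audit_log, "credit-scoring", "model_deployed", "region_a")
append_entry(audit_log, "credit-scoring", "threshold_changed", "region_a")
print(verify_chain(audit_log))
```

The chain does not prevent tampering, but it lets an auditor confirm that the recorded history is intact.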

CHALLENGES OF CROSS-BORDER AI COMPLIANCE

Cross-border AI deployments bring complex challenges. Different countries have different rules for data privacy, AI use, and liability. This section covers the key challenges and how to mitigate them.

Conflicting Laws: One of the toughest hurdles is when laws clash across countries. For instance, a data privacy rule in the EU may conflict with a local AI regulation in Asia.

The safest approach is to classify all AI systems by risk and data sensitivity. When rules collide, apply the stricter standard or limit the system’s use in high-risk regions.
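Where requirements can be ranked from lenient to strict, "apply the stricter standard" can be expressed as a small merge over every region a system touches. The sketch below assumes two hypothetical requirement types and placeholder values; it does not describe any real jurisdiction.

```python
# Hypothetical per-region requirements. Values are placeholders, not legal facts.
REGION_RULES = {
    "region_a": {"max_retention_days": 30, "human_review": True},
    "region_b": {"max_retention_days": 180, "human_review": False},
}


def strictest_rules(regions: list[str]) -> dict:
    """Combine rules for every region a system touches, keeping the stricter value."""
    rules = [REGION_RULES[r] for r in regions]
    return {
        # Shorter retention is stricter, so take the minimum.
        "max_retention_days": min(r["max_retention_days"] for r in rules),
        # If any region requires human review, require it everywhere.
        "human_review": any(r["human_review"] for r in rules),
    }


print(strictest_rules(["region_a", "region_b"]))
```

This only works for rules that can be ordered. When one region forbids what another mandates, no single setting satisfies both, which is where limiting the system's use in a region comes in.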

Data Localization: Some countries require data to stay within borders.

To handle this, you can use privacy-preserving architectures, deploy AI models regionally, or host data in local data centers.

Third-Party Risk: Outsourcing AI services doesn’t remove your liability. Your contracts must clearly state vendor responsibilities, data retention rules, and obligations during legal inquiries.

Dynamic Regulatory Space: AI regulations and standards keep changing, so make governance an ongoing effort. Assign someone to watch regulatory updates and adapt policies quickly.

Cross-Jurisdictional Liability: Prepare for unexpected legal or regulatory decisions. Keep your data handling and documentation processes transparent, and maintain explainable AI logs. Clear records serve as evidence of responsible practice and can reduce penalties.

By combining these strategies, companies can deploy AI across borders safely and securely.

BEST PRACTICES AND RECOMMENDED AI GOVERNANCE FRAMEWORK MODELS

Organizations that work across regions need reliable ways to manage different AI regulations. Several proven AI governance framework models can bring structure, reduce repeated work, and give your teams a standard way to manage the risk associated with cross-border AI compliance.

Unified Control Framework

The Unified Control Framework (UCF) offers a simple way to handle multiple global rules. It gathers thousands of requirements and organizes them into one clear set of controls, helping teams operate under a unified standard.

Key points:

- UCF provides one control map for global laws.

- Teams connect their identified risks to this map and then apply the same baseline across all areas.

- This approach reduces confusion and facilitates faster audits.

Multi-Layer Governance Framework

Another helpful AI governance framework model is a multi-layer, or five-layer, approach. It connects broad ideas to daily work. For example, values like fairness, clarity, and accountability are embedded in each layer and become part of the daily workflow.

Key points:

- Leadership proposes high-level AI principles.

- These principles are translated into processes, actions, and tests.

- Teams know exactly how to follow these values.

Hybrid Governance Approach

Many businesses now blend AI oversight with their existing risk and compliance systems, integrating AI into their overall compliance and risk management program. This approach also reduces cost because teams already understand these systems.

Key points:

- AI oversight fits into regular risk processes.

- Workflows stay clear and familiar.

- Redundant reviews decrease, leading to faster adoption.

Lifecycle Governance

Lifecycle governance tracks AI from the early design phase through deployment to final retirement. Controls appear at every stage so teams can manage risks even when models change or run in new regions.

Key points:

- Controls are applied during the design, development, testing, and launch phases.

- Monitoring continues even after deployment.

- Retirement steps prevent unused models from generating hidden risks.
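The lifecycle points above can be sketched as a series of stage gates, where a system moves forward only once the controls for its current stage are complete. The stage names follow the list above; the control names are hypothetical examples, not a prescribed checklist.

```python
from enum import Enum


class Stage(Enum):
    DESIGN = 1
    DEVELOPMENT = 2
    TESTING = 3
    LAUNCH = 4
    MONITORING = 5
    RETIRED = 6


# Hypothetical controls that must be signed off before leaving each stage.
REQUIRED_CONTROLS = {
    Stage.DESIGN: {"impact_assessment"},
    Stage.DEVELOPMENT: {"data_review"},
    Stage.TESTING: {"bias_test", "security_test"},
    Stage.LAUNCH: {"regional_signoff"},
    Stage.MONITORING: {"decommission_plan"},
}


def advance(current: Stage, completed: set[str]) -> Stage:
    """Move to the next stage only if every control for the current stage is complete."""
    if current is Stage.RETIRED:
        raise ValueError("retired systems cannot advance")
    missing = REQUIRED_CONTROLS[current] - completed
    if missing:
        raise ValueError(f"cannot leave {current.name}: missing {sorted(missing)}")
    return Stage(current.value + 1)


print(advance(Stage.DESIGN, {"impact_assessment"}).name)
```

Requiring a decommission plan before a system leaves monitoring is one way to make the retirement step explicit rather than leaving unused models running.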

Together, these models give companies flexible and scalable ways to create a cross-border AI governance framework that meets global rules and supports smooth operations.

CONCLUSION

A clear cross-border AI governance framework is essential for safe and lawful operations in 2025. Rules are multiplying quickly across the world, so companies need to adapt at the same pace. The EU AI Act, several US sector rules, and many new Asian standards all demand careful and consistent practices. Because these varying regulations leave gaps, every business needs an AI governance approach that works in many places and still supports quick growth.

The ISO/IEC 42001:2023 AI management system standard offers a practical way to meet that goal. It provides a clear structure that brings technical controls, daily procedures, and ethical duties into one system. It also creates clear guidance in a world with many different expectations. As a result, teams reduce confusion, improve audit preparation, and show regulators and customers that their AI programs follow responsible practices.

CertPro guides organizations through the full process, from early readiness checks to final certification. Our services cover impact reviews, documentation checks, control reviews, and certification for audit-ready clients. This method gives your teams clear direction at each step.

If your business builds or uses AI in several regions, this is the right time to set up a unified AI governance framework. Connect with CertPro and begin your ISO 42001 journey today.

FAQ

What is an example of AI governance?

An example of AI governance is a clear set of rules that guide how a company builds, tests, and uses AI systems. It covers data use, fairness checks, risk controls, and monitoring to support safe and responsible outcomes.

Which framework is used for AI governance best practices?

ISO 42001 is a leading framework for AI governance best practices. It gives businesses a simple management structure to plan, control, and monitor AI systems. It also supports responsible development, trusted decision-making, and ongoing risk control.

What is cross-border AI compliance?

Cross-border AI compliance means following different rules in each region where an AI system operates. It covers data use, fairness, model controls, and documentation. It also helps companies reduce legal risk and support safe global deployment.

What are the key principles of AI governance?

Key principles include accountability, transparency, data quality, fairness, and ongoing risk control. These principles help teams monitor AI behavior, prevent harm, and support trust. They also guide safe design, clear documentation, and responsible deployment across regions.

How does ISO 42001 help businesses achieve cross-border AI compliance?

ISO 42001 helps companies manage different regional rules through one unified system. It creates clear controls for risk, data handling, testing, and monitoring. This approach reduces confusion, supports faster audits, and improves trust in global AI operations.