SHADOW AI: DETECTION, RISK CONTROLS AND A PLAYBOOK FOR SAFE ENTERPRISE AI

Imagine you are a busy team member rushing to meet a deadline. To finish the task, you copy a chunk of sensitive project data and paste it into a generative AI chatbot to “speed things up.” As expected, the work gets done. The whole process sounds harmless and efficient, right?

However, it may take some time to realize that your company’s confidential information has left your security boundary for good. This small action captures the crux of the growing shadow AI and generative AI risks inside modern enterprises. According to a recent KPMG survey, more than 44% of employees have used AI tools in ways that contravene company policies and guidelines, which indicates how prevalent shadow AI already is. In other words, the survey suggests that shadow AI is no longer a theoretical risk but a present and growing threat.

Shadow AI refers to the use of artificial intelligence tools that employees adopt on their own without approval from IT or security teams. They might be experimenting, trying to boost productivity, or simply curious. But because these tools sit outside your organization’s control, every prompt, document, or snippet of code they upload creates exposure you can’t see and can’t track. CISOs, compliance heads, and IT directors, who are responsible for safeguarding their organization’s data, struggle to get full visibility into this issue.

These unapproved AI interactions can lead to accidental data leaks, noncompliance with regulations like GDPR and HIPAA, loss of intellectual property, and long-term damage to trust.

This article aims to clarify what shadow AI is and to give you a sense of control over generative AI use. You’ll learn how to detect shadow AI inside your environment, what risk controls actually work, and how to build a practical playbook that keeps innovation alive while protecting your data. The goal is to help you create an enterprise AI environment that’s safe, predictable, and aligned with your responsibility as a leader.

TL;DR:

Concern: Shadow AI is rising inside companies because employees use public AI tools to finish tasks faster. It feels harmless at first, but it can quietly expose sensitive data and create gaps in compliance, security, and oversight. Leaders struggle because these actions stay hidden until something goes wrong.

Overview: Shadow AI grows when teams try to improve productivity without approved tools or clear rules. It leads to leaks, audit failures, and loss of intellectual property. Left unchecked, shadow AI risks spread across departments and become harder to manage over time. Strong visibility, simple policies, and steady monitoring help reduce this risk.

Solution: A structured AI governance system creates control and trust. ISO/IEC 42001:2023 (AIMS) gives companies a clear way to manage AI use and close gaps that shadow AI creates. CertPro supports this journey with ISO 42001 assessment and certification services for audit-ready clients. Our team helps you understand your current risks, build responsible AI practices, and ensure that your AI governance model is ready for certification.

WHAT IS SHADOW AI AND WHY DOES IT MATTER NOW?

Shadow AI is the use of AI tools inside a company without approval or oversight from IT, security, or compliance teams. Most often, it grows quietly in the background. Employees try new AI tools on their own to increase productivity and efficiency in their daily workflows. Put simply, they want quick results and easy ways to finish tough tasks. Many leaders confuse shadow AI with shadow IT, but the two are different, as the following definitions show.

- Shadow IT: Unapproved software, storage, or services (for example, personal file-sharing tools or unsanctioned SaaS apps).

- Shadow AI: Unapproved AI tools and models, where employees paste prompts, data, source code, documents, and even customer details into external AI systems they barely understand.

Both create blind spots, but shadow AI carries a sharper set of risks because the data goes straight into external systems.

Why Shadow AI Matters Now

Shadow AI matters for four main reasons:

- Employees paste sensitive files, contracts, logs, and designs into public AI tools without any formal record.

- Regulations and standards like GDPR, HIPAA, and PCI DSS expect clear control over how data is collected and stored, and shadow AI bypasses those controls.

- Research indicates that the workforce is rapidly adopting AI, with a significant portion of employees using these tools without understanding their safety or legality.

- One leak or one wrong decision based on unreviewed AI output is enough to damage brand trust and trigger regulatory investigations.

Recent studies paint a worrying picture. According to PwC’s 2025 Global Workforce Hopes & Fears Survey, only about 14% of workers use generative AI daily, and just 54% used AI at work at all over the past year. Meanwhile, a KPMG study found that up to 58% of employees use AI productivity tools every day, despite a lack of clear policies in most organizations. Whichever figure is closer to reality, the problem is no longer limited to one careless employee. It occurs across departments, and even those responsible for protecting the company use unsanctioned tools. They try to finish small tasks quickly, but they expose the company in the process.

Such behavior creates a quiet but growing risk. Shadow AI is spreading fast, and without clear controls, it invites data leaks, lost intellectual property (IP), and regulatory trouble.

WHAT ARE THE RISKS OF USING SHADOW AI?

When employees lack a clear picture of what shadow AI is, it sneaks into daily work through quick fixes and rushed tasks. It feels helpful at first, yet it quietly exposes data, weakens controls, and creates risks that leaders never see coming. This section covers the main kinds of shadow AI risk.

Data Exposure and Leakage

Shadow AI risks often start with small habits. Your employee might paste a customer contract into a public chatbot to draft a summary. Another might upload a product roadmap to get quick edits. These actions feel harmless in the moment, yet they push sensitive material into systems outside company control.

- Prompt-based leaks happen when staff share internal content with public tools. For example, sales teams often paste pricing sheets, and engineers sometimes paste error logs with system details.

- Model training risk appears when tools use uploaded data to train or refine their models. This means private information can influence future outputs for unknown users.

Compliance and Regulatory Violations

Unapproved AI pushes companies into legal danger without warning.

- Rules like GDPR, HIPAA, and PCI DSS expect strict control over personal and regulated data, and public AI tools rarely meet these standards. Uploading personal data to unsanctioned AI tools can violate GDPR principles such as purpose limitation and data minimization. It can also breach data processing agreements, Business Associate Agreements (BAAs), or PCI DSS controls that your approved systems already enforce.

- Shadow AI use typically comes without logs, audit trails, or DPIAs (Data Protection Impact Assessments), so you can’t prove what data was shared or how it was processed. This puts teams in a tough spot during audits or investigations.

Intellectual Property Risks

Shadow AI can quietly erode a company’s competitive edge.

- Prompt leakage happens when someone enters source code or designs into a public model. Attackers or competitors might recreate patterns or logic.

- Contract and licensing issues arise when employees click through AI tool terms that hand over rights to text or uploads.

Model Integrity and Drift

Teams may trust AI outputs without checking accuracy.

- Without version control or review, changes in model behavior over time go unnoticed.

- This leads to biased or incorrect answers that can influence product decisions, risk assessments, or customer responses.

Credential and Identity Risks

Employees sometimes paste login details or internal URLs for quick troubleshooting.

- Personal accounts or free tools create unauthorized pathways into company data.

Governance Blind Spots

Shadow AI grows when no one owns generative AI risk.

- Different teams adopt tools on their own.

- Policies stay inconsistent, which leaves leaders blind to real exposure. In practice, shadow AI prevents consistent enforcement of AI policies and controls, weakening your overall AI governance and leaving critical gaps in oversight.

TOOLS AND TECHNIQUES TO DETECT SHADOW AI

Shadow AI detection can feel tricky, especially when teams use AI tools without permission. Yet you can still build clear visibility if you rely on the right signals. Treat detection as a continuous practice rather than a one-time event.

Visibility Mechanisms

- Monitor data flow: Network logs, proxy logs, and DNS (Domain Name System) queries reveal traffic to public AI platforms.

- Watch endpoints: Modern endpoint tools can flag browser tabs or extensions talking to AI sites. This helps you identify silent usage that network logs alone can miss.
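
As a rough illustration of the network-log signal above, the following Python sketch counts lookups of AI-related domains per client. The watchlist, the log format (`<timestamp> <client_ip> <queried_host>`), and the function names are assumptions made for this example; a real deployment would read the export format of your own DNS resolver or proxy.

```python
from collections import Counter

# Illustrative watchlist only; maintain your own list of AI-related domains.
AI_DOMAINS = {"chat.openai.com", "gemini.google.com", "claude.ai"}


def is_ai_domain(host: str) -> bool:
    """Return True if host is a watched domain or a subdomain of one."""
    host = host.lower().rstrip(".")  # normalize case and trailing root dot
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)


def count_ai_lookups(log_lines):
    """Count AI-domain lookups per client IP.

    Assumes each line looks like '<timestamp> <client_ip> <queried_host>'.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip lines that do not match the assumed format
        _, client, host = parts
        if is_ai_domain(host):
            hits[client] += 1
    return hits


sample = [
    "2025-01-10T09:00:01Z 10.0.0.5 chat.openai.com",
    "2025-01-10T09:00:02Z 10.0.0.5 intranet.example.com",
    "2025-01-10T09:00:03Z 10.0.0.7 api.claude.ai.",
    "2025-01-10T09:00:04Z 10.0.0.5 claude.ai",
]
print(count_ai_lookups(sample))
```

Matching on the full domain suffix rather than a substring avoids flagging unrelated hosts that merely contain a watched name.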

Data Loss Prevention (DLP) Adaptations

- AI-aware rules: Update DLP to detect prompts that contain sensitive data. Block or alert when users paste protected text into unknown AI sites.

- Browser controls: Add guardrails that stop confidential information from reaching public AI interfaces. This helps sales and support teams that process large volumes of data, and it also supports better data protection and AI governance across the organization.
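
To make the idea of an AI-aware DLP rule more concrete, here is a minimal Python sketch that scans outgoing prompt text for a few sensitive patterns and returns a block or allow decision. The pattern set and the `scan_prompt` and `decide` helpers are hypothetical; commercial DLP engines use far richer detection, and these regular expressions would need tuning before any real use.

```python
import re

# Illustrative patterns only; real DLP rules need validation and tuning.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def scan_prompt(text: str) -> list[str]:
    """Return the sorted names of all patterns found in the prompt text."""
    return sorted(name for name, rx in PATTERNS.items() if rx.search(text))


def decide(text: str) -> str:
    """Return 'block' if any sensitive pattern matches, otherwise 'allow'."""
    return "block" if scan_prompt(text) else "allow"


print(scan_prompt("Contact jane.doe@example.com, SSN 123-45-6789"))
print(decide("Summarize our public blog post"))
```

A rule like this could alert instead of block during an initial rollout, so teams can measure false positives before enforcement.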

Access and Identity Controls

- IAM integration: Connect AI tools to your Identity and Access Management (IAM) system. Grant more freedom to roles that need it, like R&D or engineering, and restrict high-risk roles to vetted platforms.

- Personal account risks: Track logins that use personal AI accounts. Encourage or require enterprise-managed accounts to avoid data exposure.
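
The role-based approach above can be sketched as a simple policy check. The role names, tool names, and the `AccessRequest` type below are placeholders invented for this example; in practice, this logic would usually live in your IAM or SSO platform rather than in custom code.

```python
from dataclasses import dataclass

# Hypothetical role-to-tool mapping; all names are placeholders.
ROLE_ALLOWLIST = {
    "engineering": {"approved-code-assistant", "approved-chat"},
    "rnd": {"approved-code-assistant", "approved-chat", "sandbox-models"},
    "finance": {"approved-chat"},
}


@dataclass(frozen=True)
class AccessRequest:
    user: str
    role: str
    tool: str
    enterprise_account: bool  # False means the user signed in with a personal account


def evaluate(req: AccessRequest) -> tuple[bool, str]:
    """Return (allowed, reason) for an AI tool access request."""
    if not req.enterprise_account:
        return False, "personal account"
    if req.tool not in ROLE_ALLOWLIST.get(req.role, set()):
        return False, "tool not approved for role"
    return True, "ok"


print(evaluate(AccessRequest("dana", "rnd", "sandbox-models", True)))
print(evaluate(AccessRequest("lee", "finance", "sandbox-models", True)))
```

Unknown roles fall through to an empty allowlist, so the sketch denies by default.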

Audit and Logging

- Track inputs and outputs: Keep a secure log of prompts and responses for investigations.

- Version tracking: Record which AI model or version generated an output. This information helps during audits and dispute checks.
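
One possible shape for such a log is sketched below in Python: each record stores the prompt, the response, and the model version, and carries a hash that links it to the previous record so that later edits become detectable. Hash chaining is one assumption about what "secure" could mean here, not a requirement from any standard, and the field names are illustrative. Because the log itself contains prompts, it would need the same access controls as the data it records.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def make_audit_record(user, model, model_version, prompt, response, prev_hash=""):
    """Build one log record that is linked to the previous record's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model": model,
        "model_version": model_version,
        "prompt": prompt,
        "response": response,
        "prev_hash": prev_hash,
    }
    body["hash"] = _digest(body)  # computed before the 'hash' key is added
    return body


def verify_chain(records) -> bool:
    """Return True if every record is unmodified and correctly linked."""
    prev = ""
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "hash"}
        if rec["prev_hash"] != prev or _digest(body) != rec["hash"]:
            return False
        prev = rec["hash"]
    return True
```

A typical use would append one record per AI interaction and run `verify_chain` before handing the log to auditors.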

Threat Hunting and Anomaly Detection

- Behavior baselines: Build a baseline of normal AI usage and alert when someone exceeds it.

- SIEM, SASE, and SSE integrations: Many Security Information and Event Management (SIEM), Secure Access Service Edge (SASE), and Security Service Edge (SSE) platforms now recognize AI traffic patterns, which helps security teams catch misuse faster.
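
A behavior baseline can be as simple as a mean and standard deviation over recent activity. The sketch below flags a daily count of AI requests that sits well above a user's history. The threshold `k=3.0` and the function name are assumptions for illustration; SIEM platforms typically offer more robust anomaly models.

```python
from statistics import mean, stdev


def exceeds_baseline(history, today, k=3.0):
    """Flag today's count if it is more than k standard deviations above the mean.

    history: past daily counts of AI requests for one user or team.
    Returns False when there is too little history to form a baseline.
    Note: if every past value is identical, any increase is flagged.
    """
    if len(history) < 2:
        return False
    return today > mean(history) + k * stdev(history)


past_week = [10, 12, 9, 11, 10, 13, 11]
print(exceeds_baseline(past_week, 40))
print(exceeds_baseline(past_week, 12))
```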

Applied together, these techniques help a business build a culture and capability for sustained shadow AI detection.

A PRACTICAL PLAYBOOK FOR ENTERPRISE SHADOW AI

Most teams want to use AI because it helps them work faster. Yet this pull often creates blind spots. So, a clear playbook is necessary to help leaders guide that energy without blocking innovation.

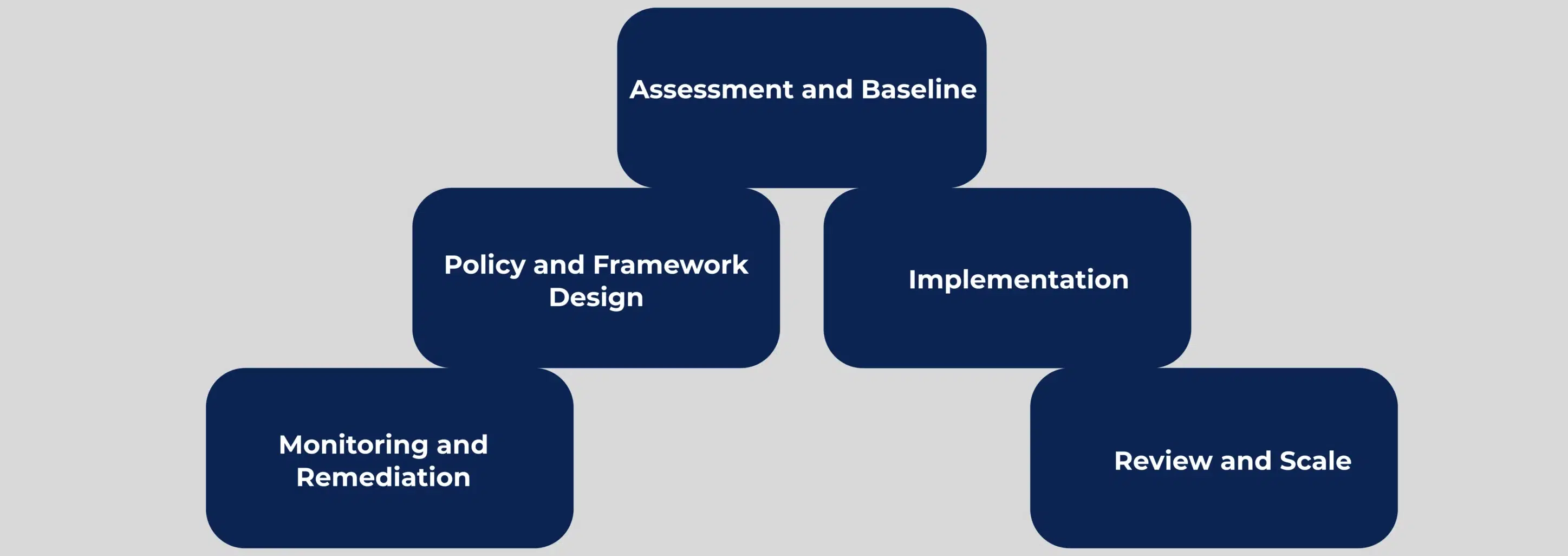

Assessment and Baseline: Start with an honest look at what’s already happening. Map every AI tool in use, both approved and unapproved. Many firms discover marketing teams using free writing tools, developers testing code assistants, or analysts feeding sensitive data into public chatbots. After listing these tools, group them by risk, weighing data exposure, legal responsibilities, and how often each tool is used. This gives you a realistic baseline.
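
The grouping step above could be sketched as a small scoring model. The 1-to-5 scales, the penalty for unapproved tools, and the tier thresholds are all assumptions chosen for illustration; your governance group would set its own weights.

```python
from dataclasses import dataclass


@dataclass
class AITool:
    name: str
    approved: bool
    data_exposure: int    # 1 (low) to 5 (high)
    legal_impact: int     # 1 (low) to 5 (high)
    usage_frequency: int  # 1 (rare) to 5 (daily)


def risk_score(tool: AITool) -> int:
    """Sum the three factors and add a fixed penalty for unapproved tools."""
    score = tool.data_exposure + tool.legal_impact + tool.usage_frequency
    return score + (0 if tool.approved else 3)


def risk_tier(tool: AITool) -> str:
    """Map a score to a tier using illustrative thresholds."""
    score = risk_score(tool)
    if score >= 12:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


inventory = [
    AITool("public-chatbot", False, 5, 4, 4),
    AITool("approved-assistant", True, 2, 2, 5),
    AITool("grammar-plugin", True, 1, 1, 3),
]
for tool in sorted(inventory, key=risk_score, reverse=True):
    print(tool.name, risk_score(tool), risk_tier(tool))
```

Sorting the inventory by score gives a first-pass priority list for the policy and implementation steps that follow.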

Policy and Framework Design: Next, set the rules. Form a small governance group with IT, security, legal, and business leads. Write a simple policy that people can follow without confusion. Then build a control framework that explains who can use what, how data should be handled, and how monitoring will work.

Implementation: Put these controls into practice. Use Data Loss Prevention (DLP), proxies, and SIEM to detect unsafe behavior. Provide approved AI platforms so teams avoid untrusted tools, and link all access to IAM so permissions stay aligned with real tasks. Train your people with real examples so the guidance feels easy to use. This helps build better data protection and AI discipline across the organization.

Monitoring and Remediation: Watch patterns, catch violations early, and fix them quickly. Use alerts, dashboards, and periodic reports to keep leaders informed.

Review and Scale: Finally, treat this model as a living and ongoing system. Review incidents, adjust the playbook, and expand governance as new AI models and agentic workflows enter the workplace. This approach keeps the program steady as AI adoption grows.

HOW ISO/IEC 42001:2023 HELPS YOU CONTROL SHADOW AI

ISO/IEC 42001:2023 is the world’s first Artificial Intelligence Management System (AIMS) standard. It defines requirements for establishing, implementing, maintaining, and continually improving an AI management system.

For organizations that worry about shadow AI, ISO/IEC 42001 offers a structured way to close the gaps:

- It connects AI governance, risk management, ethics, and transparency in one framework.

- It calls for clearly defined roles and responsibilities for AI, including who owns AI risk in each part of the business.

- It encourages risk-based controls for AI use cases, including data protection, model lifecycle, and monitoring.

- It gives you a clear and consistent way to show regulators, customers, and partners that you follow the rules and act responsibly.

Startups and fast-moving companies benefit especially because the standard gives them clarity and structure before shadow AI risk escalates.

CONCLUSION

Shadow AI is not growing because people are careless. It’s growing because teams feel pressure to move fast, and they don’t have a safe system that supports that speed. This gap creates real trouble for leaders who must protect data, meet regulations, and still keep the business moving. That’s why this moment calls for steady, practical governance instead of last-minute fixes.

ISO/IEC 42001:2023 AIMS (Artificial Intelligence Management System) gives you that structure. It helps you close the blind spots that shadow AI exploits. It also gives you a repeatable way to control AI use, prove compliance, and guide your teams without slowing them down. Startups and fast-moving companies benefit the most because the standard gives them clarity before AI chaos sets in.

This is where CertPro steps in. We help you assess your current AI environment, uncover the hidden risks, and build the controls you need for safe use. Our team guides you through the ISO AI certification process with our ISO 42001 assessment and certification services. We provide complete guidance through documentation review, risk assessment, and impact assessment.

If you want to reduce your exposure and build an AI management system that feels safe and predictable, then connect with CertPro today. We’ll help you turn AI from an uncontrollable liability into an understandable strength for your business.

FAQ

What is shadow AI?

Shadow AI refers to employees using AI tools without approval from IT or security teams. It usually happens when staff turn to public chatbots or apps for quick tasks, which creates hidden data exposure and weakens enterprise control.

What are the risks of shadow AI?

Shadow AI creates data leaks, compliance violations, loss of intellectual property, and unreliable outputs. Because the company has no oversight of unapproved tools, leaders cannot track what information was shared, review activity, or demonstrate that sensitive data was handled safely.

What is an example of shadow AI?

A common example is an employee pasting customer data or source code into a public chatbot to finish work faster. The task feels quick and harmless, yet the sensitive information leaves the company’s security boundary without any monitoring.

How does ISO 42001 help control shadow AI?

ISO 42001 gives companies a structured approach for managing AI risks. It helps define roles, controls, and monitoring practices that reduce blind spots and make AI use safer, more predictable, and ready for certification.

How do startups handle shadow AI with limited security resources?

Startups can begin with simple steps: establish an AI usage policy, create a basic inventory of tools, restrict access to unsafe tools, and select a single approved AI platform. This approach creates control without the need for a large security team.
