The AI revolution is already underway, transforming industries through automation, enhanced decision-making, and improved customer experiences. Regulators have taken note. Across the globe, governments are drafting strict laws and regulations that govern how AI systems operate. The EU AI Act is one of the most prominent examples, setting tough rules for high-risk AI applications. Similar frameworks are emerging in other regions, too, and adhering to them is necessary to run a business safely. ISO/IEC 42001 is a governance standard that helps businesses meet these expectations, and it is one they can't afford to ignore.

ISO/IEC 42001:2023 is the first international standard designed specifically for AI governance. Unlike generic compliance frameworks, it focuses on how organizations build, deploy, and monitor AI responsibly. It helps companies create clear processes to manage risks such as bias, privacy breaches, and lack of transparency, which can damage reputation and lead to large regulatory fines. Why does this matter now? Because non-compliance is already a present-day risk, and organizations must address it proactively through a robust AI Management System (AIMS). For example, an AI-driven recruitment tool flagged for discrimination could land your company in a legal battle. Likewise, a chatbot that mishandles personal data could trigger GDPR penalties. These are no longer hypothetical risks; cases like these are already making headlines.

By adopting ISO 42001, businesses get a structured, certifiable way to demonstrate that their AI systems are governed ethically, safely, and in line with applicable rules. In short, ISO 42001 certification is about building trust, reducing risk, and staying competitive in a market that demands both innovation and accountability. When regulators, partners, and customers ask, “Is your AI trustworthy?”, ISO 42001 helps you answer confidently, with evidence.

TL;DR:

Concern: AI is changing how businesses work, but strict laws like the EU AI Act are coming fast. Ignoring them can lead to big fines, legal trouble, and a damaged reputation.

Overview: ISO 42001 is the world’s first international standard for safe and responsible AI use. It helps you set clear policies, manage risks, and monitor AI systems continuously. It applies to all industries and covers key areas such as fairness, security, and ethics at every stage of the AI lifecycle.

Solution: Getting ISO 42001 certified shows that your AI is governed responsibly and can be trusted. It also integrates well with management-system standards such as ISO 27001 and supports GDPR compliance. CertPro makes this easy with quick gap checks and full support through audits and assessments. So, don’t wait until an issue or incident occurs. Partner with CertPro now and build AI systems that regulators and customers can trust.

WHAT IS ISO 42001?

ISO 42001 is the world’s first international standard for managing AI responsibly. It was officially published in December 2023 as ISO/IEC 42001:2023. It gives organizations a clear framework to establish, implement, maintain, and continually improve an Artificial Intelligence Management System (AIMS).

So, what difference does it make in practice? Implementing an ISO/IEC 42001 AI Management System (AIMS) means establishing structured processes that govern how AI systems are designed, deployed, and monitored. The goal is simple: reduce risks such as bias, data misuse, and unpredictable behavior while keeping the spirit of innovation alive. Unlike tech guidelines that sit on a shelf, ISO 42001 is practical and flexible. It suits all industries, from healthcare providers using AI for diagnostics to banks relying on AI for fraud detection. Any sector where AI decisions affect people or critical operations can use this standard to stay accountable and compliant.

At the heart of ISO 42001 is the Plan-Do-Check-Act (PDCA) cycle. This approach ensures that AI governance is not something you perform once in a while but a continuous commitment. You plan your policies, implement them, monitor the results, and improve them continuously. For businesses under rising regulatory pressure, this means no more panic when an incident occurs. Instead, you have a system in place that monitors risks on an ongoing basis. This kind of sustained governance is what customers, regulators, and partners expect from organizations that claim to use AI ethically.

WHY ISO 42001 MATTERS FOR REGULATORY AND ETHICAL AI COMPLIANCE

ISO 42001 gives organizations a practical, measurable way to achieve AI governance through its clear structure. Clauses 1 to 3 explain the standard’s scope and key terms, ensuring that everyone speaks the same language. The real work begins with clauses 4 to 10, which cover the essential requirements: understanding your AI context, leadership commitment, identifying risks, planning controls, running operations, reviewing performance, and improving. The annexes make the standard even more useful. Annex A lists reference AI control objectives and controls, and Annex B offers guidance on implementing them in practice. Annex C helps you identify potential AI-related objectives and common risk sources, while Annex D discusses how to apply the management system across different domains and sectors.

Transparency, accountability, fairness, privacy, and continuous improvement form the foundation of ISO 42001. These are core principles of AI ethics, and regulators, partners, and customers expect you to uphold them. Getting them right helps you stay trusted in an AI-driven world. ISO 42001 also helps you tackle real-world issues such as bias, safety, explainability, and data governance by turning them into operational requirements throughout the AI lifecycle. The standard brings third parties into scope, too: if you use a third-party model or data source, it must meet your compliance and governance requirements. This way, risks don’t sneak in through your partners.

Because ISO 42001 shares its high-level structure with other management-system standards, it integrates naturally with ISO 27001 for information security and complements ISO 31000 for risk management. That means you can reuse policies, roles, and review processes instead of inventing new ones from scratch. This alignment also makes ISO 42001 a useful bridge to regulatory obligations, especially under the EU AI Act and GDPR. So, if an auditor asks about your risk management process, you can point to mapped controls, approvals, and monitoring records. In this sense, ISO 42001 certification is backed by documented governance, traceable decisions, and auditable records that build trust with boards, regulators, and customers.

KEY STEPS TO IMPLEMENT ISO 42001 IN YOUR ORGANIZATION

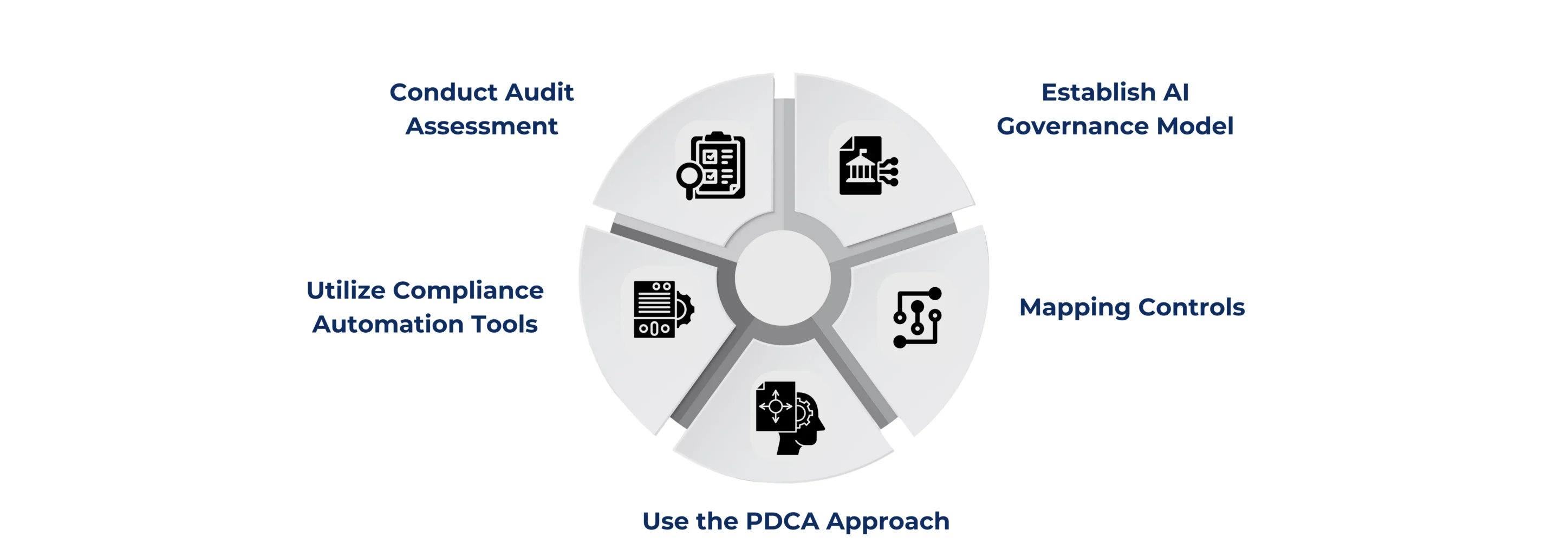

Effective AI management requires clear steps and structured planning. Businesses implementing ISO 42001 can follow these steps.

Conduct a Readiness Assessment: Start with a quick ISO 42001 audit readiness assessment. List your AI use cases, data flows, and third-party models. Then align them with your existing frameworks, such as ISO 27001, your GDPR compliance program, and your enterprise risk management procedures. Next, perform a gap analysis, assign roles, document processes, and set a timeline for completion.

Establish an AI Governance Model: Start by naming one person who is accountable for the AIMS. Form a small AI risk council with representatives from legal, security, data, product, and operations. Assign clear roles to team members, such as approving use cases, reviewing model risks, and tracking fixes. Finally, communicate decisions clearly so that everyone understands their responsibilities in upholding AI ethics.

Map Controls to Risks: Run an AI risk assessment for each system and choose a treatment for every risk (avoid, reduce, transfer, or accept). Then compare your choices against the reference controls in Annex A and document why you include or exclude each control. ISO 42001 calls this record a statement of applicability.
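To make the mapping step more concrete, here is a minimal Python sketch of a risk register check, assuming you track risks and control decisions as simple records. The class names, the `check_register` function, and control IDs like `CTRL-BIAS-TEST` are hypothetical placeholders, not identifiers from ISO 42001 or Annex A. The sketch flags control decisions without a written justification, risks marked "reduce" that have no controls, and risks that rely on controls you have excluded.

```python
from dataclasses import dataclass, field
from enum import Enum


class Treatment(Enum):
    """The four risk treatment options named in the mapping step."""
    AVOID = "avoid"
    REDUCE = "reduce"
    TRANSFER = "transfer"
    ACCEPT = "accept"


@dataclass
class Risk:
    risk_id: str
    system: str          # the AI system this risk belongs to
    description: str
    treatment: Treatment
    control_ids: list[str] = field(default_factory=list)  # hypothetical control IDs


@dataclass
class ControlDecision:
    control_id: str      # placeholder, not a real Annex A identifier
    included: bool       # True if the control is implemented
    justification: str   # why it is included or excluded


def check_register(risks, decisions):
    """Return a list of gaps; an empty list means none were found."""
    problems = []
    by_id = {d.control_id: d for d in decisions}
    # Every include/exclude decision needs a written justification.
    for d in decisions:
        if not d.justification.strip():
            problems.append(f"{d.control_id}: missing justification")
    for r in risks:
        # In this sketch, only "reduce" must point to at least one control.
        if r.treatment is Treatment.REDUCE and not r.control_ids:
            problems.append(f"{r.risk_id}: 'reduce' treatment has no controls")
        for cid in r.control_ids:
            decision = by_id.get(cid)
            if decision is None:
                problems.append(f"{r.risk_id}: control {cid} has no applicability decision")
            elif not decision.included:
                problems.append(f"{r.risk_id}: relies on excluded control {cid}")
    return problems


if __name__ == "__main__":
    risks = [
        Risk("R1", "resume-screener", "Biased candidate ranking", Treatment.REDUCE, ["CTRL-BIAS-TEST"]),
        Risk("R2", "support-chatbot", "Personal data in replies", Treatment.REDUCE),
    ]
    decisions = [
        ControlDecision("CTRL-BIAS-TEST", True, "Mitigates R1"),
        ControlDecision("CTRL-ENERGY", False, ""),
    ]
    for problem in check_register(risks, decisions):
        print(problem)
```

With the sample data, the check reports two gaps: the excluded control that has no justification and the chatbot risk that has no mitigating control. A GRC tool would typically run this kind of consistency check for you. The sketch only illustrates the logic.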

Use the PDCA Approach: Deploy your AI systems with the controls you selected, test their performance, and monitor key metrics such as bias, data quality, and uptime. Document issues, review them through internal audits and management reviews, and keep improving through the PDCA cycle to stay compliant and up to date.
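The "Check" stage of PDCA usually comes down to comparing monitored metrics against agreed limits. The following Python sketch assumes binary model decisions (1 = positive outcome), a group label for each decision, and input records stored as dictionaries. It measures bias as the demographic parity difference, which is the gap between the highest and lowest positive-outcome rates across groups, and it measures data quality as the share of records missing a required field. The thresholds and function names are illustrative assumptions. Your own risk assessment should set the real limits and choose the fairness metric that fits your use case.

```python
from collections import defaultdict

# Hypothetical limits; set the real values from your own risk assessment.
THRESHOLDS = {"parity_gap": 0.10, "missing_rate": 0.05}


def selection_rates(predictions, groups):
    """Share of positive (1) predictions for each group label."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred  # assumes pred is 0 or 1
    return {g: positives[g] / totals[g] for g in totals}


def parity_gap(predictions, groups):
    """Demographic parity difference: highest minus lowest selection rate.

    Expects at least one prediction.
    """
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def missing_rate(records, field_name):
    """Fraction of records where field_name is absent or None."""
    if not records:
        return 0.0
    missing = sum(1 for r in records if r.get(field_name) is None)
    return missing / len(records)


def check_metrics(predictions, groups, records, field_name):
    """Map each metric to (value, breached) for the PDCA 'Check' step."""
    values = {
        "parity_gap": parity_gap(predictions, groups),
        "missing_rate": missing_rate(records, field_name),
    }
    return {name: (value, value > THRESHOLDS[name]) for name, value in values.items()}


if __name__ == "__main__":
    preds = [1, 0, 1, 1, 0, 0]
    groups = ["a", "a", "a", "b", "b", "b"]
    records = [{"age": 30}, {"age": None}, {"age": 41}]
    for name, (value, breached) in check_metrics(preds, groups, records, "age").items():
        status = "REVIEW" if breached else "ok"
        print(f"{name}: {value:.2f} {status}")
```

With this sample data, both metrics exceed their limits: the parity gap is about 0.33, and one record in three is missing an age. Both would be logged as issues for review in the next PDCA cycle.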

Use Compliance Automation Tools: To make the ISO 42001 certification process easier, rely on tools rather than manual work. Cloud platforms and Governance, Risk, and Compliance (GRC) tools can automate critical tasks such as evidence collection and control tracking. The goal is to move away from scattered spreadsheets toward simple, repeatable processes that save time and reduce mistakes.

WHERE ISO 42001 STANDS IN THE CONTEXT OF GLOBAL AI STANDARDS

ISO 42001 is a global standard that gives businesses a structured approach to responsible AI management. It defines a complete management system, with policies, roles, and processes that cover every stage of AI use. The standard matters because regulators increasingly want proof of how you control AI risks.

For example, the EU AI Act classifies AI systems into four risk levels: unacceptable (banned), high, limited, and minimal. High-risk AI, such as some facial recognition or healthcare applications, is subject to strict requirements, including risk assessments, data quality checks, human oversight, security, and ongoing monitoring. ISO 42001 certification helps you put many of these requirements into practice, although it does not by itself guarantee compliance with the Act. Some academic analyses also regard ISO 42001 as one of the most comprehensive frameworks for managing AI systems across their lifecycle.

ISO 42001 is also part of a larger family of AI standards developed by ISO/IEC JTC 1/SC 42, including ISO/IEC 22989 for AI concepts and terminology and ISO/IEC 23894 for AI risk management guidance. Because it is designed to work alongside these related standards, ISO 42001 helps you stay ahead of regulations, reduce compliance headaches, and build trustworthy AI systems that uphold the principles of AI ethics.

PARTNER WITH CERTPRO AND MAKE AI COMPLIANCE YOUR GROWTH STRATEGY

AI increasingly influences critical business decisions. But with this opportunity comes the responsibility to manage AI ethically. New regulations such as the EU AI Act make compliance mandatory, and non-compliance can mean heavy fines, reputational damage, and loss of customer trust. For startups and enterprises alike, ignoring ISO 42001 today could lead to expensive fixes tomorrow.

This is where CertPro steps in as your trusted ISO 42001 assessment and certification partner for audit-ready organizations. We focus on verifying and certifying your AI management systems against international standards. Our process is designed to be clear, efficient, and aligned with your business objectives. With CertPro, you get:

- Expert-led assessments built around your existing compliance framework.

- Alignment with related standards like ISO 27001 and GDPR for streamlined certification.

- Faster audit timelines through collaboration with industry-leading compliance automation tools.

Don’t wait for compliance issues to harm your progress. Let CertPro help you build trust, reduce risk, and achieve certification without confusion. Connect with us today to begin your ISO 42001 audit and certification journey.

FAQ

Who needs ISO 42001?

Any business using AI should consider ISO 42001. It ensures safe, ethical, and compliant AI operations. It’s vital for tech firms, startups, healthcare, finance, and enterprises aiming for trust, transparency, and regulatory alignment.

Is it mandatory to be ISO 42001 certified?

ISO 42001 certification is voluntary. However, regulations such as the EU AI Act impose mandatory obligations, so demonstrating responsible AI governance is becoming essential. Certification provides independent evidence that you manage AI risks responsibly, which can help you avoid penalties and build trust with customers and regulators worldwide.

What is the difference between ISO 42001 and ISO 9001?

ISO 42001 focuses on responsible AI governance, covering ethics, transparency, and risk controls. ISO 9001 deals with quality management across business processes. One ensures ethical AI use, while the other ensures product and service quality.

What are the five ethics of AI?

Five commonly cited AI ethics principles are fairness, transparency, accountability, privacy, and safety. These principles guide responsible AI design and use, reducing bias and risk while supporting trust and compliance with global regulations.

What is AI data management?

AI data management means collecting, storing, and organizing data used by AI systems. It ensures data quality, security, and compliance, helping AI models work accurately and ethically without violating privacy or regulations.

About the Author

Abhijith Rajesh

Abhijith Rajesh is an Associate Manager at CertPro, specializing in ISO 27001, SOC 2, GDPR, and other information security compliance frameworks. He leads a dedicated team, ensuring the delivery of top-tier information security solutions. Abhijith excels in managing projects, optimizing security frameworks, and guiding clients through the complexities of the ever-evolving threat landscape.
